Process vs Thread Difference: A Complete Guide to OS Task Execution

A detailed technical guide explaining the differences between processes and threads, covering memory management, context switching, and multithreading vs multiprocessing.

Drake Nguyen

Founder · System Architect

The Process vs Thread Difference in Task Execution

If you are stepping into the world of computer science or software engineering, grasping the process vs thread difference is absolutely crucial. Modern software is incredibly fast and complex, but beneath that speed lies a carefully orchestrated dance of resource management. In this guide, we will break down the fundamental process vs thread difference so you can understand exactly how applications run under the hood.

To fully appreciate how an application executes, you must understand the core units of work that the OS schedules. Comparing processes and threads opens the door to parallel execution, enabling you to write or manage software that is fast, responsive, and efficient. We will cover each aspect in turn, from memory handling to execution overhead.

What is a Process in Operating Systems?

An operating system is the manager of a computer's hardware and software resources, and one of its main jobs is managing processes. A "process" is an independent, self-contained instance of a computer program that is currently being executed.

When you launch a web browser or start a video game, the operating system gives that application its own virtual address space and its own system resources, such as file handles. Every process is isolated: if one process crashes, it generally does not impact others. To keep track of these running instances, the OS uses a data structure called a Process Control Block (PCB). Later, we will contrast the PCB with the Thread Control Block (TCB) to see how granular system tracking really gets.
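The isolation described above can be observed directly. The following is a minimal sketch, assuming a POSIX system where Python's "fork" start method is available; the names `notes`, `child_task`, and `demo_isolation` are made up for illustration. The child process appends to a list, but the parent's copy of that list stays empty because each process has its own address space.

```python
import multiprocessing as mp
import os

notes = []  # module-level list living in the parent's address space


def child_task(conn):
    # Runs in the child: the append changes only the child's copy of `notes`.
    notes.append("written by child")
    conn.send((os.getpid(), len(notes)))
    conn.close()


def demo_isolation():
    # Assumption: a POSIX system where the "fork" start method is available.
    ctx = mp.get_context("fork")
    parent_conn, child_conn = ctx.Pipe()
    proc = ctx.Process(target=child_task, args=(child_conn,))
    proc.start()
    child_pid, child_len = parent_conn.recv()
    proc.join()
    # The parent's list was never touched by the child.
    return child_pid, child_len, len(notes)


if __name__ == "__main__":
    child_pid, child_len, parent_len = demo_isolation()
    print(f"parent pid={os.getpid()} sees {parent_len} notes")
    print(f"child  pid={child_pid} saw {child_len} notes")
```

Note that the only way the child's result reaches the parent here is through the pipe, which is an explicit OS-provided channel rather than shared memory.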

What is a Thread? Understanding Lightweight Processes

If a process is a heavy, isolated container, a thread is the worker inside that container. A thread is the smallest sequence of programmed instructions that an operating system scheduler can manage independently, and it is the basic building block of multithreading.

Because threads within the same process share the same memory and resources, they are often referred to as lightweight processes. This shared environment is what makes threads efficient: creating a new thread typically takes less time and fewer system resources than spawning an entirely new process, because no new address space has to be set up. To set the stage for the comparison that follows, remember this analogy: if a process is a factory, the threads are the workers sharing tools and space on the assembly line.
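To make the "workers in one factory" picture concrete, here is a small sketch (the function name `collect_thread_info` is hypothetical) in which several threads each report the process ID and their own thread identifier. Every thread should report the same process ID, while the thread identifiers differ.

```python
import os
import threading


def collect_thread_info(n_threads=4):
    records = []
    lock = threading.Lock()
    barrier = threading.Barrier(n_threads)

    def worker():
        # Hold every thread here so all of them are alive at the same time.
        barrier.wait()
        with lock:
            records.append((os.getpid(), threading.get_ident()))

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return records


if __name__ == "__main__":
    for pid, tid in collect_thread_info():
        print(f"process {pid}, thread {tid}")
```

The barrier is there only so the threads overlap in time; without it, a finished thread's identifier could in principle be reused by a later one.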

Core Comparison: The Difference Between Processes and Threads

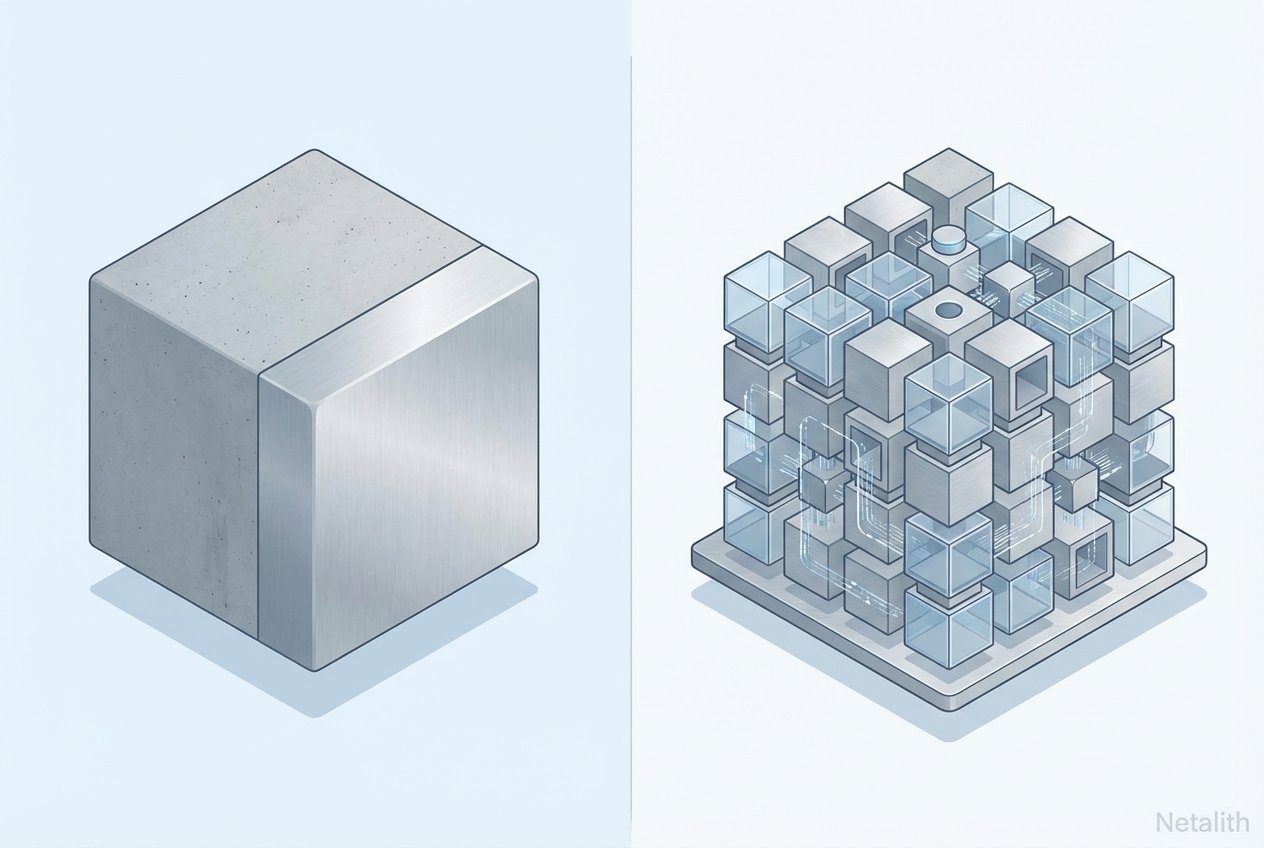

Understanding the difference between processes and threads in operating systems comes down to resource ownership, isolation, and execution overhead. The fundamental process vs thread difference lies in how they interact with the computer's memory and CPU.

- Isolation: Processes operate in strict isolation. Threads operate cooperatively within the same process boundary.

- Resource Consumption: Processes are resource-intensive because each one needs its own address space and bookkeeping, whereas threads add comparatively little overhead.

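- Communication: Inter-Process Communication (IPC) goes through OS mechanisms such as pipes, sockets, or explicitly shared memory, which makes it more complex and usually slower. Inter-thread communication is fast and direct because threads share the same memory address space.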

- Management Structures: The OS tracks process information using a PCB, whereas threads are tracked using a Thread Control Block (TCB). Knowing what each structure holds is useful for advanced system diagnostics.
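As a rough illustration of the split between the two structures, the sketch below models a PCB and a TCB as Python dataclasses. The field names are hypothetical and heavily simplified; real kernels store far more, and the exact layout differs between operating systems. The point is only that process-wide resources sit in the PCB, while each TCB holds what one thread needs to resume execution.

```python
from dataclasses import dataclass, field


@dataclass
class ThreadControlBlock:
    # Per-thread execution context (illustrative fields only).
    tid: int
    state: str = "ready"
    program_counter: int = 0
    stack_pointer: int = 0
    registers: dict = field(default_factory=dict)


@dataclass
class ProcessControlBlock:
    # Process-wide resources shared by all of its threads (illustrative).
    pid: int
    state: str = "ready"
    address_space: dict = field(default_factory=dict)  # stand-in for page tables
    open_files: list = field(default_factory=list)
    threads: list = field(default_factory=list)

    def spawn_thread(self, tid, stack_pointer):
        # A new thread gets its own TCB but reuses this PCB's resources.
        tcb = ThreadControlBlock(tid=tid, stack_pointer=stack_pointer)
        self.threads.append(tcb)
        return tcb


if __name__ == "__main__":
    pcb = ProcessControlBlock(pid=100, open_files=["stdin", "stdout"])
    pcb.spawn_thread(tid=1, stack_pointer=0x7000)
    pcb.spawn_thread(tid=2, stack_pointer=0x8000)
    print(pcb)
```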

Memory Management and Sharing

To grasp memory management basics, look at how memory is divided. A process gets a completely isolated virtual address space. Threads, by contrast, live inside their parent process, so they share the process's code segment, data segment, heap, and open files.

However, threads still need individual execution contexts. Therefore, each thread gets its own stack and registers. Furthermore, developers can use thread-local storage (TLS) for variables that should be visible to every function running in a specific thread but hidden from other threads in the same process.
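The contrast between shared memory and thread-local storage can be sketched with Python's `threading.local`. In this example (the names `shared_log`, `per_thread`, and `run_workers` are made up for illustration), every thread appends to the same list, but each thread's `per_thread.name` is private to it.

```python
import threading

shared_log = []                  # one list, visible to every thread
shared_lock = threading.Lock()
per_thread = threading.local()   # each thread sees its own attributes


def current_name():
    # Any function running in a thread can read that thread's TLS value.
    return per_thread.name


def worker(name):
    per_thread.name = name       # stored in this thread's private slot
    with shared_lock:
        shared_log.append(current_name())


def run_workers(names):
    shared_log.clear()
    threads = [threading.Thread(target=worker, args=(n,)) for n in names]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(shared_log)


if __name__ == "__main__":
    print(run_workers(["alpha", "beta", "gamma"]))
    # The main thread never set a name, so it has no such attribute.
    print(hasattr(per_thread, "name"))
```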

Context Switching and Performance Overhead

The operating system's CPU scheduler is constantly switching between different tasks to give the illusion of simultaneous execution. When you compare a context switch between threads with one between processes, the performance gap can be significant.

Switching between separate processes requires the OS to save the outgoing process's state in its PCB, switch to a different address space, and on many architectures invalidate some or all of the translation lookaside buffer (TLB). The incoming process also tends to find the CPU caches cold. This is computationally expensive. Switching between two threads of the same process is usually cheaper because the address space remains the same; the OS only needs to save and restore thread-specific state such as the registers, stack pointer, and program counter. Keeping this cost in mind is essential when reasoning about concurrency and parallelism in modern software design.

Concurrency vs Parallelism in OS

The concepts surrounding the process vs thread difference naturally lead to a conversation about how tasks are executed over time. A common stumbling block for beginners is the difference between concurrency and parallelism.

Concurrency is about managing multiple tasks at once. A system is concurrent if it can have two or more tasks in progress over the same period, often by rapidly switching between them on a single CPU core. Parallelism is about executing multiple tasks at exactly the same instant, which requires multiple CPU cores or processors.

Because multiple threads might try to modify shared data concurrently, programmers must use thread synchronization primitives, such as mutexes, locks, and semaphores, to prevent data corruption and race conditions.
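As a minimal sketch of one such primitive, the example below protects a shared counter with a `threading.Lock`. The `Counter` class and `count_with_threads` function are illustrative names, not part of any library. The increment is a read-modify-write sequence, so it is wrapped in the lock to make sure no update is lost.

```python
import threading


class Counter:
    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # The read-modify-write below is the critical section.
        with self._lock:
            self.value += 1


def count_with_threads(n_threads=4, n_increments=10_000):
    counter = Counter()

    def worker():
        for _ in range(n_increments):
            counter.increment()

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


if __name__ == "__main__":
    print(count_with_threads())
```

With the lock in place, the final value equals `n_threads * n_increments`; without it, that result is no longer guaranteed.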

Multithreading vs Multiprocessing: When to Use Which?

Choosing between these approaches is a daily challenge for software engineers. Use the guidelines below to decide when multithreading fits and when multiprocessing is the better choice.

Use Multithreading when:

- Your application is I/O-bound (e.g., waiting for network responses).

- Tasks need to share large amounts of data without copying it between address spaces.

- You want a responsive User Interface (UI), such as a web browser rendering pages while downloading files.

Use Multiprocessing when:

- Your application is heavily CPU-bound (e.g., video encoding).

- You require strict isolation where one worker's failure cannot crash the whole application.
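The I/O-bound case above can be sketched with Python's `concurrent.futures.ThreadPoolExecutor`. Here `fake_fetch` is a hypothetical stand-in for a network call: it only sleeps, which is roughly what a thread does while waiting on I/O. Because the waits overlap, the total time should be closer to one delay than to the sum of all of them.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_fetch(item_id, delay=0.05):
    # Stand-in for a network request: the thread just waits, as it would on I/O.
    time.sleep(delay)
    return item_id * 2


def fetch_all(item_ids, max_workers=8):
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the inputs.
        results = list(pool.map(fake_fetch, item_ids))
    elapsed = time.perf_counter() - start
    return results, elapsed


if __name__ == "__main__":
    results, elapsed = fetch_all(range(8))
    print(results, f"{elapsed:.2f}s")
```

For CPU-bound work, the same module offers `ProcessPoolExecutor` with a matching interface, which trades the shared memory of threads for the isolation of separate processes.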

When mapping user-level threads onto kernel-level execution units, OS designers refer to multithreading models such as one-to-one (1:1) and many-to-many (M:N). Regardless of the model, managing shared resources always requires robust thread synchronization primitives to maintain data integrity.

User-Level Threads vs Kernel-Level Threads Comparison

A deep dive into operating system architecture reveals that not all threads are managed the same way. User-level threads are managed by a library in user space without kernel intervention, which makes creating and switching them fast. However, when many of them are mapped onto a single kernel thread, they cannot run on multiple processors at once, and one blocking system call can stall them all. Kernel-level threads are managed directly by the OS, allowing for true parallel execution but with higher management overhead. Understanding how user space and kernel space interact is vital for optimizing high-performance applications.
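The idea of scheduling in user space can be sketched with Python generators standing in for user-level threads. This is an analogy under stated assumptions, not how any particular threading library is implemented: `task` and `run_round_robin` are hypothetical names, and the "scheduler" is an ordinary loop. The kernel sees a single thread throughout, which is why this style switches cheaply but cannot use more than one core.

```python
from collections import deque


def task(name, steps, log):
    for step in range(steps):
        log.append(f"{name}:{step}")
        yield  # voluntarily hand control back to the scheduler


def run_round_robin(tasks):
    # A user-space scheduler: no kernel involvement in switching tasks.
    ready = deque(tasks)
    while ready:
        current = ready.popleft()
        try:
            next(current)          # run the task until its next yield
            ready.append(current)  # still has work left, so requeue it
        except StopIteration:
            pass                   # task finished, so drop it


if __name__ == "__main__":
    log = []
    run_round_robin([task("A", 2, log), task("B", 3, log)])
    print(log)  # ['A:0', 'B:0', 'A:1', 'B:1', 'B:2']
```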

Conclusion: Mastering Process and Thread Dynamics

Mastering the process vs thread difference is essential for any developer or system architect aiming to build scalable systems. Processes provide the isolation and stability required for independent applications, while threads offer the efficiency and speed needed for high-performance concurrency. As modern OS architectural trends continue to evolve, the ability to balance these two units will remain a cornerstone of efficient computing. By understanding how to manage memory, reduce context-switching overhead, and implement proper synchronization, you can leverage the full power of modern hardware.