Python Multiprocessing Example

A practical guide with examples of Python multiprocessing: cpu_count, Process, Queue, Lock, Pool, and best practices for parallel processing.

Drake Nguyen

Founder · System Architect

Introduction to Python multiprocessing

This Python multiprocessing example shows how to run work in separate processes so a program can use multiple CPU cores. The multiprocessing module provides the Process, Queue, Lock and Pool classes for building parallel processing pipelines and distributing work (data parallelism) safely across processes.

Check available CPUs (cpu_count)

Before starting parallel work you often want to know how many cores are available. multiprocessing.cpu_count() returns the number of logical CPUs in the system, which helps you choose a pool size or the number of worker processes. This is not necessarily the number of CPUs the current process is allowed to use.

```python
import multiprocessing

print("Available CPUs:", multiprocessing.cpu_count())
```
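cpu_count() counts every logical CPU in the machine, so a program confined to fewer cores (for example by CPU affinity) may want a smaller number. The sketch below uses os.sched_getaffinity where the platform provides it and falls back to cpu_count() elsewhere. usable_cpus and pick_pool_size are hypothetical helper names, and the cap of 8 is an arbitrary illustration.

```python
import multiprocessing
import os

def usable_cpus():
    """Return how many CPUs this process may run on, falling back to cpu_count()."""
    # os.sched_getaffinity is only available on some platforms (e.g. Linux);
    # it honours CPU-affinity limits that cpu_count() ignores.
    if hasattr(os, "sched_getaffinity"):
        return len(os.sched_getaffinity(0))
    return multiprocessing.cpu_count()

def pick_pool_size(cap=None):
    """Hypothetical helper: usable CPUs, optionally capped, never below 1."""
    n = usable_cpus()
    if cap is not None:
        n = min(n, cap)
    return max(1, n)

if __name__ == '__main__':
    print("Logical CPUs:", multiprocessing.cpu_count())
    print("Usable CPUs:", usable_cpus())
    print("Pool size (capped at 8):", pick_pool_size(cap=8))
```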

Process class: start(), join(), args

The Process class runs a target function in a separate child process. Call start() to launch it and join() to wait for it to finish. Pass positional arguments to the target with args, which must be a tuple (a single argument needs a trailing comma, as in args=("A",)). Always protect process-creation code with an if __name__ == '__main__': guard: under the spawn start method each child re-imports the main module, and unguarded process-creation code would run again in every child.

```python
from multiprocessing import Process

def worker(name):
    print(f"Worker {name} is running in process")

if __name__ == '__main__':
    p = Process(target=worker, args=("A",))
    p.start()
    p.join()  # wait until the process completes
```
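A common mistake when launching several workers is calling join() inside the same loop as start(), which makes each process finish before the next one begins. The sketch below starts every process first and joins afterwards, passes keyword arguments with kwargs, names each process, and reads exitcode (0 means a normal exit). The repeat parameter and the run_workers helper are illustrative additions, not part of the original example.

```python
from multiprocessing import Process

def worker(name, repeat=1):
    for _ in range(repeat):
        print(f"Worker {name} is running in process")

def run_workers(names):
    """Start one process per name, wait for all of them, return their exit codes."""
    procs = [
        Process(target=worker, args=(n,), kwargs={"repeat": 2}, name=f"proc-{n}")
        for n in names
    ]
    for p in procs:
        p.start()  # start everything first so the workers run concurrently
    for p in procs:
        p.join()   # then wait for each one
    return [p.exitcode for p in procs]

if __name__ == '__main__':
    print(run_workers(["A", "B", "C"]))  # [0, 0, 0] when every worker exits normally
```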

Queue class: inter-process communication

A multiprocessing.Queue is a process-safe FIFO: producers put() items and consumers get() them. Items are serialized with pickle on the way in, so everything you put() must be picklable.

```python
from multiprocessing import Queue

q = Queue()
q.put("task-1")
print(q.get())  # prints: task-1
```
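The snippet above uses the queue within a single process. To see the queue actually carry data between processes, a minimal sketch can pass it to a child that produces values while the parent consumes them. The producer and collect_squares names are illustrative. The parent drains the queue before calling join(), because a process that has put items on a queue may not terminate until those items have been flushed to the underlying pipe.

```python
from multiprocessing import Process, Queue

def producer(q, n):
    for i in range(n):
        q.put(i * i)  # each item is pickled and sent through a pipe

def collect_squares(n):
    q = Queue()
    p = Process(target=producer, args=(q, n))
    p.start()
    # get() blocks until an item arrives; the timeout raises queue.Empty instead of hanging forever
    results = [q.get(timeout=5) for _ in range(n)]
    p.join()  # join only after the queue has been drained
    return results

if __name__ == '__main__':
    print(collect_squares(4))  # [0, 1, 4, 9]
```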

Lock class: coordinate access to shared resources

Use a Lock when multiple processes must not execute a critical section at the same time. acquire() blocks until the lock is available, release() frees it, and a with block calls both for you. Note: Queue objects are already synchronized and usually do not require an extra lock for safe access.

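```python
from multiprocessing import Lock, Process

def safe_print(lock, msg):
    with lock:  # acquire() on entry, release() on exit, even if print raises
        print(msg)

if __name__ == '__main__':
    # Create the lock in the parent and pass it to the child explicitly:
    # a module-level Lock would be re-created separately in each spawned child.
    lock = Lock()
    p = Process(target=safe_print, args=(lock, "hello"))
    p.start()
    p.join()
```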
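A Lock matters most when processes update shared state. counter.value += 1 on a shared Value is a read-modify-write sequence, so without a lock concurrent increments can be lost. The sketch below creates a raw shared integer with Value("i", 0, lock=False) and guards it with an explicit Lock to match this section; a synchronized Value also exposes its own lock through get_lock(). The worker and iteration counts are arbitrary.

```python
from multiprocessing import Lock, Process, Value

def increment(counter, lock, times):
    for _ in range(times):
        with lock:  # serialize the read-modify-write below
            counter.value += 1

def count_with_lock(workers=4, times=1000):
    lock = Lock()
    counter = Value("i", 0, lock=False)  # raw shared int, protected by our own lock
    procs = [Process(target=increment, args=(counter, lock, times)) for _ in range(workers)]
    for p in procs:
        p.start()
    for p in procs:
        p.join()
    return counter.value

if __name__ == '__main__':
    print(count_with_lock())  # 4000
```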

Putting the pieces together: a Python multiprocessing example with queues

The following example builds a simple task queue: the main process (the producer) fills a tasks Queue, several consumer processes take tasks from it, do the work, and push completion messages into a results Queue. It combines Queue put()/get(), Process start()/join(), and a for loop that spawns a fixed number of workers.

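```python
from multiprocessing import Process, Queue, current_process
import time

SENTINEL = None  # tells a consumer that there is no more work

def consumer(tasks_q, results_q):
    while True:
        # get() blocks until an item arrives. get_nowait() can raise queue.Empty
        # spuriously while the queue's feeder thread is still flushing tasks.
        task = tasks_q.get()
        if task is SENTINEL:
            break
        time.sleep(0.2)  # simulate work
        results_q.put(f"{task} done by {current_process().name}")

if __name__ == '__main__':
    tasks_q = Queue()
    results_q = Queue()
    num_tasks = 8
    num_workers = 4

    # enqueue tasks, then one sentinel per worker so every consumer stops
    for i in range(num_tasks):
        tasks_q.put(f"task-{i}")
    for _ in range(num_workers):
        tasks_q.put(SENTINEL)

    workers = []
    for _ in range(num_workers):  # spawn 4 processes
        p = Process(target=consumer, args=(tasks_q, results_q))
        workers.append(p)
        p.start()

    # Collect exactly num_tasks results before join(): empty() is unreliable,
    # and joining a process that still has buffered queue items can deadlock.
    for _ in range(num_tasks):
        print(results_q.get())

    for p in workers:
        p.join()
```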

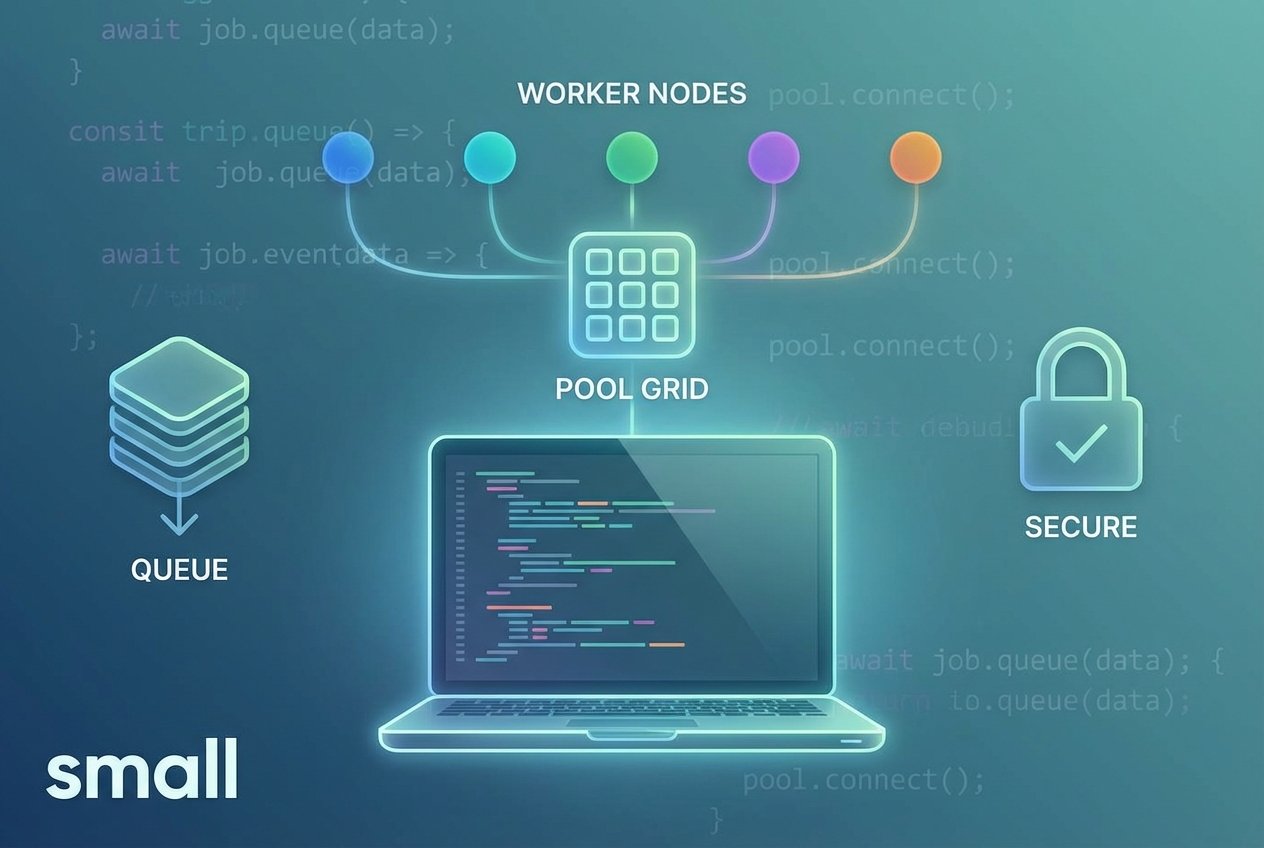
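multiprocessing also offers JoinableQueue, whose task_done() and join() methods let the parent wait until every queued item has been processed. The sketch below is one way to apply it to the same producer/consumer pattern, assuming None as the stop signal and one task_done() call per get(), stop signals included; run is a hypothetical helper, and the results are sorted only to make the output deterministic.

```python
from multiprocessing import JoinableQueue, Process, Queue

def consumer(tasks_q, results_q):
    while True:
        task = tasks_q.get()
        try:
            if task is None:  # stop signal for this consumer
                return
            results_q.put(f"{task} done")
        finally:
            tasks_q.task_done()  # exactly one task_done() per get()

def run(num_tasks=8, num_workers=4):
    tasks_q = JoinableQueue()
    results_q = Queue()
    workers = [Process(target=consumer, args=(tasks_q, results_q)) for _ in range(num_workers)]
    for p in workers:
        p.start()
    for i in range(num_tasks):
        tasks_q.put(f"task-{i}")
    for _ in range(num_workers):
        tasks_q.put(None)  # one stop signal per worker
    tasks_q.join()  # blocks until every item put() above has been marked task_done()
    results = [results_q.get(timeout=5) for _ in range(num_tasks)]  # drain before join()
    for p in workers:
        p.join()
    return sorted(results)

if __name__ == '__main__':
    for line in run():
        print(line)
```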

Pool: a Python multiprocessing Pool example with map

Pool provides a high-level interface for applying a function to every item of an iterable in parallel. Pool.map blocks until all results are ready and returns them in input order; Pool.apply_async submits a single call and returns immediately with an AsyncResult that you can query later. The Pool manages the worker processes and schedules tasks for you.

```python
from multiprocessing import Pool
import time

def work(item):
    name, delay = item
    time.sleep(delay)
    return f"{name} done"

if __name__ == '__main__':
    inputs = [("A", 1), ("B", 2), ("C", 1)]
    with Pool(processes=2) as pool:
        results = pool.map(work, inputs)  # blocks until every item is processed
    print(results)  # ['A done', 'B done', 'C done'] (map keeps input order)
```
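For the asynchronous side, a minimal sketch reuses the same work function. apply_async returns an AsyncResult immediately, leaving the parent free until it calls get(), and imap_unordered yields results in completion order rather than input order. The run_async and run_unordered helpers and the 30-second timeout are illustrative choices.

```python
from multiprocessing import Pool
import time

def work(item):
    name, delay = item
    time.sleep(delay)
    return f"{name} done"

def run_async(inputs):
    with Pool(processes=2) as pool:
        # apply_async returns an AsyncResult per call without waiting
        pending = [pool.apply_async(work, (item,)) for item in inputs]
        # ... the parent could do other work here ...
        return [r.get(timeout=30) for r in pending]  # get() blocks until each result is ready

def run_unordered(inputs):
    with Pool(processes=2) as pool:
        # results arrive as each task finishes, not in input order
        return list(pool.imap_unordered(work, inputs))

if __name__ == '__main__':
    inputs = [("A", 1), ("B", 2), ("C", 1)]
    print(run_async(inputs))  # ['A done', 'B done', 'C done']
    print(run_unordered(inputs))
```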

Multiprocessing vs threading

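Each process has its own interpreter and its own Global Interpreter Lock (GIL), so multiprocessing lets CPU-bound Python code run on several cores at once, at the cost of process start-up time and pickling data between processes. For I/O-bound tasks, threading is usually sufficient: threads share memory, are cheaper to create, and blocking I/O calls release the GIL while they wait.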
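One way to observe the difference is to run the same CPU-bound function through multiprocessing.pool.ThreadPool, which has the same API as Pool but uses threads. The sketch below times both. On a standard CPython build (with the GIL) and a multi-core machine, the process pool typically finishes sooner, but the exact timings depend on your hardware and interpreter, so none are claimed here. The job size and worker count are arbitrary.

```python
import time
from multiprocessing import Pool
from multiprocessing.pool import ThreadPool

def cpu_bound(n):
    # Pure-Python arithmetic: a thread must hold the GIL for all of this work.
    total = 0
    for i in range(n):
        total += i * i
    return total

def timed(pool_cls, jobs, workers=4):
    """Run cpu_bound over jobs with the given pool class; return (results, seconds)."""
    start = time.perf_counter()
    with pool_cls(workers) as pool:
        results = pool.map(cpu_bound, jobs)
    return results, time.perf_counter() - start

if __name__ == '__main__':
    jobs = [1_000_000] * 8
    thread_results, t_threads = timed(ThreadPool, jobs)
    proc_results, t_procs = timed(Pool, jobs)
    assert thread_results == proc_results  # same answers, different execution model
    print(f"ThreadPool: {t_threads:.2f}s")
    print(f"Pool:       {t_procs:.2f}s")
```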

Further tips and best practices

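- Always use the __main__ guard when creating processes. On Windows and macOS the default start method is spawn, which re-imports the main module in every child; without the guard, each child would re-run the process-creation code.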

- Keep data passed between processes picklable (avoid open file handles or sockets unless intentionally shared).

- Prefer Queue or Pipe for inter-process communication; use Lock to protect non-synchronized shared state.

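- Pool() sizes itself from the CPU count when processes is omitted; set the size explicitly (for example from multiprocessing.cpu_count()) when you need to leave cores free or the work is not purely CPU-bound.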
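Because the __main__ guard and picklability rules matter most under spawn, one way to catch problems early is to request that start method explicitly, even on a platform whose default differs. The sketch below uses multiprocessing.get_context, which raises ValueError if a method is unavailable on the current OS; run_with and square are illustrative names.

```python
import multiprocessing as mp

def square(x):
    return x * x

def run_with(method):
    """Run a tiny pool under an explicit start method such as 'spawn'."""
    ctx = mp.get_context(method)  # ValueError if the method is unavailable here
    with ctx.Pool(processes=2) as pool:
        return pool.map(square, range(5))

if __name__ == '__main__':
    print(mp.get_all_start_methods())  # which methods this platform supports
    print(run_with("spawn"))  # [0, 1, 4, 9, 16]
```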

These snippets cover the most common Python multiprocessing patterns: cpu_count, Process with start()/join(), Queue put()/get(), Lock usage and Pool-based parallel processing. For real projects, add robust error handling and graceful worker shutdown to avoid deadlocks when joining processes.
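For error handling in a Pool, an exception raised inside a worker is re-raised in the parent when you call get() on the corresponding AsyncResult. The sketch below collects failures per item instead of aborting on the first one; parse and parse_all are hypothetical names, and int() parsing stands in for real work.

```python
from multiprocessing import Pool

def parse(text):
    return int(text)  # raises ValueError for input such as "x"

def parse_all(items, timeout=10):
    """Parse items in a pool; return (values, errors) instead of failing on the first error."""
    values, errors = [], []
    with Pool(processes=2) as pool:
        pending = [(item, pool.apply_async(parse, (item,))) for item in items]
        for item, res in pending:
            try:
                # re-raises the worker's exception here; raises
                # multiprocessing.TimeoutError if no result arrives in time
                values.append(res.get(timeout=timeout))
            except ValueError as exc:
                errors.append((item, str(exc)))
    return values, errors

if __name__ == '__main__':
    values, errors = parse_all(["1", "2", "x", "4"])
    print("values:", values)
    print("errors:", errors)
```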