How To Work with Unicode in Python

A practical guide to Unicode in Python: code points, UTF-8 encoding and decoding, unicodedata normalization (NFC/NFD/NFKC/NFKD), and handling UnicodeEncodeError and UnicodeDecodeError.

Drake Nguyen

Founder · System Architect

Introduction

This guide explains how Unicode works in Python and how to handle text reliably across systems. You will learn what Unicode represents, how Python treats strings and bytes, and how to encode and decode text, normalize it with the unicodedata module, and handle common errors.

Prerequisites

- Python 3 installed (strings are Unicode by default, and UTF-8 is the default source file encoding).

- Basic familiarity with Python syntax, strings, and the interactive console.

- A text editor or terminal where you can run short examples.

Understanding code points, strings, and bytes

Unicode is a universal mapping of characters to code points (for example, U+00A9). Python 3 represents text as Unicode strings (str). When you send text to files, sockets, or external systems, you convert it to bytes with an encoding such as UTF-8, and you decode incoming bytes back into strings. Keeping strings and bytes distinct is the key to working with Unicode in Python.

>>> s = '\u00A9' # copyright symbol using a Unicode code point

>>> s

'©'

>>> emoji = '🧭'

>>> emoji.encode('utf-8')

b'\xf0\x9f\xa7\xad'

>>> b"\xf0\x9f\xa7\xad".decode('utf-8')

'🧭'
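
As a small sketch of the same distinction, the built-ins ord() and chr() convert between a character and its code point, and len() counts code points for a string but bytes for its encoded form. The variable names here are illustrative only.

```python
# A string is a sequence of code points; bytes are what an encoding produces.
text = "©🧭"

# ord() gives the code point of a single character, chr() reverses it.
code_points = [ord(ch) for ch in text]
print(chr(0x00A9))  # ©

encoded = text.encode("utf-8")

print([f"U+{cp:04X}" for cp in code_points])  # ['U+00A9', 'U+1F9ED']
print(len(text))     # 2 code points
print(len(encoded))  # 6 bytes: 2 for the copyright sign, 4 for the emoji
```

The byte count depends on the encoding, which is why lengths and slices should be computed on strings rather than on encoded bytes.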

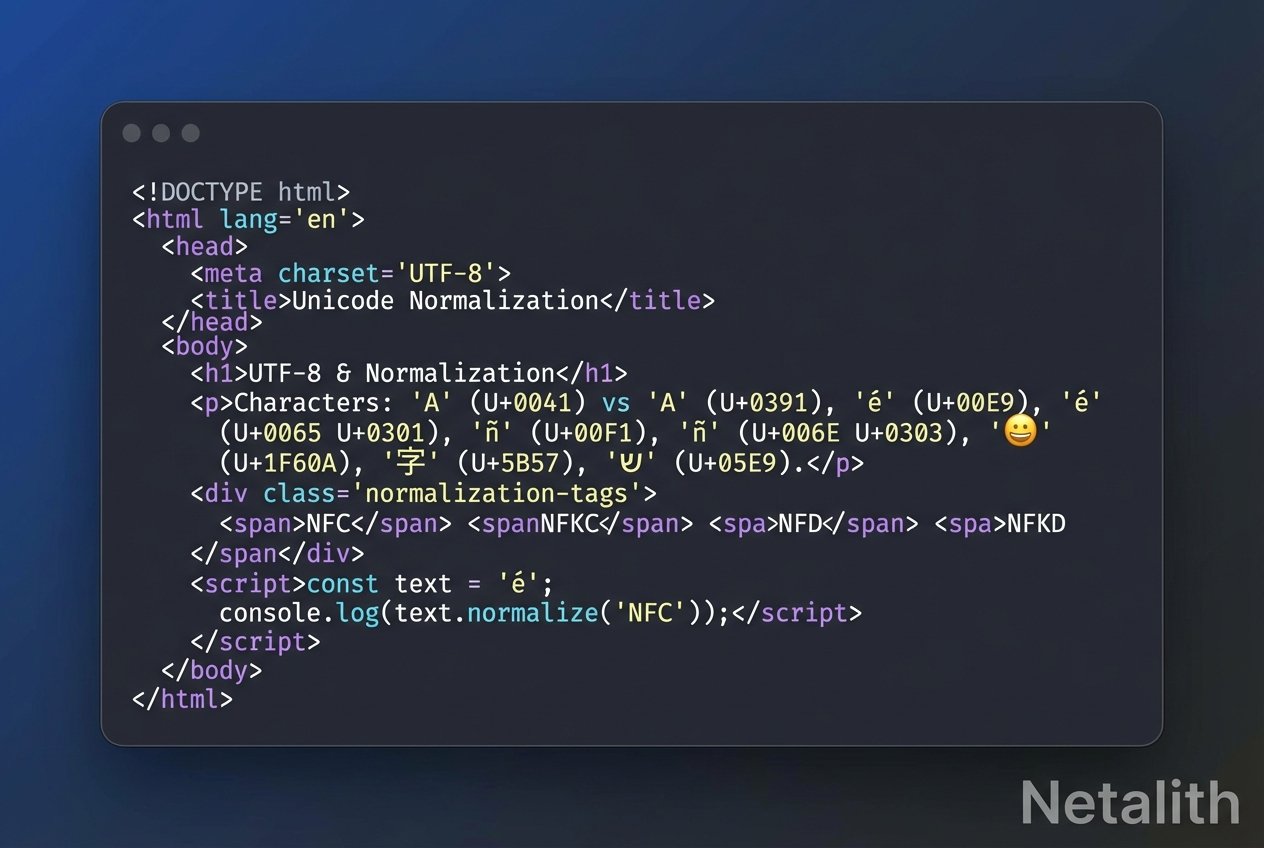

Normalizing Unicode strings in Python

Visually identical text can have different underlying code points. For example, 'é' can be a single precomposed character or 'e' followed by a combining acute accent. The two forms compare as unequal unless you normalize them first. Use unicodedata.normalize() to make comparisons and indexing reliable.

>>> s1 = 'é' # single code point

>>> s2 = 'e\u0301' # e + combining acute

>>> len(s1), len(s2)

(1, 2)

>>> s1 == s2

False
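
A minimal sketch of how normalization resolves this mismatch: converting both strings to the same form (NFC here, as one reasonable choice) before comparing makes them equal.

```python
from unicodedata import normalize

s1 = "\u00e9"   # precomposed é
s2 = "e\u0301"  # e + combining acute accent

print(s1 == s2)                                      # False
print(normalize("NFC", s1) == normalize("NFC", s2))  # True
print(len(normalize("NFC", s2)))                     # 1
```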

Normalization forms: NFD, NFC, NFKD, NFKC

Python's unicodedata module implements four common normalization forms. Picking the right one depends on your goal:

- NFD: Canonical decomposition (breaks composed characters into base + combining marks).

- NFC: Canonical composition (recomposes when possible; preferred for web input and display).

- NFKD: Compatibility decomposition (also expands compatibility characters, e.g., circled numbers).

- NFKC: Compatibility composition (like NFKD then recomposed where appropriate).

>>> from unicodedata import normalize

>>> s = 'ho\u0302tel' # h + o + combining circumflex + tel

>>> normalize('NFD', 'hôtel') # decomposed

'ho\u0302tel'

>>> normalize('NFC', s) # recomposed

'hôtel'

>>> s2 = '2\u2075ô' # contains superscript 5

>>> normalize('NFD', s2)

'2\u2075o\u0302'

>>> normalize('NFKD', s2)

'25o\u0302' # superscript 5 becomes '5'

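Use normalize('NFC', ...) for user-entered text. NFD is a useful first step for accent-insensitive processing such as search or sorting: it separates base letters from combining marks, but the marks still have to be removed afterwards. The compatibility forms (NFKC, NFKD) discard distinctions such as superscripts, so reserve them for matching and indexing rather than for text you will display again. To learn how the forms differ in detail, experiment with unicodedata.normalize on representative strings.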
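
The accent-insensitive case can be sketched as follows: decompose with NFD, then drop the combining marks. The helper names strip_accents and search_key are hypothetical, not part of unicodedata, and the approach removes every combining mark, which may be too aggressive for scripts where marks carry meaning.

```python
import unicodedata

def strip_accents(text: str) -> str:
    """Hypothetical helper: decompose, then drop combining marks."""
    decomposed = unicodedata.normalize("NFD", text)
    # combining() returns 0 for base characters and non-zero for combining marks
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))

def search_key(text: str) -> str:
    """Hypothetical key for accent- and case-insensitive comparison."""
    return strip_accents(text).casefold()

print(strip_accents("hôtel"))                      # hotel
print(search_key("HÔTEL") == search_key("hotel"))  # True
```

Keeping the original string and storing the key separately is one way to avoid losing the accents for display.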

Handling Unicode errors in Python

Two exceptions commonly appear when encoding and decoding: UnicodeEncodeError (raised when encoding a string to bytes) and UnicodeDecodeError (raised when decoding bytes to a string). Both encode() and decode() accept an errors argument that controls what happens when a character cannot be represented in the target encoding or a byte sequence is invalid for the source encoding.

Fixing UnicodeEncodeError

>>> s = '\ufb06' # the 'ﬆ' ligature (U+FB06), which is outside ASCII

>>> s.encode('utf-8')

b'\xef\xac\x86'

>>> s.encode('ascii')

# raises UnicodeEncodeError

# Safer options with an errors argument:

>>> s.encode('ascii', errors='ignore')

b''

>>> s.encode('ascii', errors='replace')

b'?'

>>> s.encode('ascii', errors='xmlcharrefreplace')

b'&#64262;'

Use ignore to skip unencodable characters, replace to substitute '?', or xmlcharrefreplace to emit XML numeric references for robust interchange.
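
If silently altering data is not acceptable, another option is to catch the exception and report exactly which characters failed. The sketch below relies on the start, end, and object attributes that UnicodeEncodeError carries; the function name encode_or_report is hypothetical. It also uses the backslashreplace handler, which keeps the code point visible in escaped form.

```python
def encode_or_report(text: str, encoding: str = "ascii") -> bytes:
    """Hypothetical helper: encode strictly, fall back to escapes with a report."""
    try:
        return text.encode(encoding)
    except UnicodeEncodeError as exc:
        bad = exc.object[exc.start:exc.end]
        print(f"cannot encode {bad!r} at position {exc.start} as {encoding}")
        # backslashreplace keeps the code point visible instead of dropping it
        return text.encode(encoding, errors="backslashreplace")

result = encode_or_report("co\ufb06")
print(result)  # b'co\\ufb06'
```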

Fixing UnicodeDecodeError

>>> # Decoding bytes with the wrong codec can raise UnicodeDecodeError

>>> original = '§A'

>>> b = original.encode('iso8859_1')

>>> b.decode('utf-8')

# raises UnicodeDecodeError

# Recover with an errors policy:

>>> b.decode('utf-8', errors='replace')

'�A'

>>> b.decode('utf-8', errors='ignore')

'A'

Always determine (or detect) the original encoding before decoding. When the source is unknown, a conservative approach is to try utf-8 first, then fall back to other encodings or use libraries that detect encoding.
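
The try-UTF-8-first approach can be sketched as below. The function name decode_with_fallback and the default fallback list are assumptions for illustration. Note that iso8859_1 maps every byte to a character, so it never fails and should come last, and a successful decode does not prove the guess was right.

```python
def decode_with_fallback(data: bytes, encodings=("utf-8", "iso8859_1")):
    """Hypothetical helper: return (text, encoding) for the first codec that works."""
    for encoding in encodings:
        try:
            return data.decode(encoding), encoding
        except UnicodeDecodeError:
            continue
    # Only reached if the caller passed a list without a catch-all codec.
    return data.decode("utf-8", errors="replace"), "utf-8 (lossy)"

print(decode_with_fallback("§A".encode("utf-8")))      # ('§A', 'utf-8')
print(decode_with_fallback("§A".encode("iso8859_1")))  # ('§A', 'iso8859_1')
```

Returning the encoding alongside the text makes it possible to log which guess was used for each input.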

Practical tips

- Prefer UTF-8 for storage and transfer: it can represent every Unicode character and stays compact for ASCII-heavy text.

- Normalize text at boundaries (input, search index, or data import) so comparisons are consistent.

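- Avoid errors='ignore' where data loss matters: it drops characters silently, so nothing is raised or logged. Prefer the default strict handling and log the exception, or use errors='replace' so that losses stay visible.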

- Use the unicodedata module to inspect categories and names when debugging tricky characters.
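
For the debugging tip above, a small sketch using unicodedata.name() and unicodedata.category() can reveal what a string really contains. The helper name describe is hypothetical.

```python
import unicodedata

def describe(text: str) -> list:
    """Hypothetical helper: one line per code point with category and name."""
    lines = []
    for ch in text:
        name = unicodedata.name(ch, "<unnamed>")  # default avoids ValueError
        lines.append(f"U+{ord(ch):04X} {unicodedata.category(ch)} {name}")
    return lines

for line in describe("e\u0301"):
    print(line)
# U+0065 Ll LATIN SMALL LETTER E
# U+0301 Mn COMBINING ACUTE ACCENT
```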

Conclusion

Working with Unicode in Python involves understanding code points, encoding and decoding between strings and bytes, applying the right normalization form with the unicodedata module, and handling UnicodeEncodeError and UnicodeDecodeError gracefully. With these techniques you can build internationalized (i18n) applications that behave predictably across platforms.

Next steps: experiment interactively with encode()/decode(), normalize() in unicodedata, and try different errors policies to see their effects on real data.