Data Lakehouse vs. Data Warehouse: Comparing Modern Data Architectures

A comprehensive comparison of data lakehouse vs data warehouse architectures, covering ACID transactions, Delta Lake, Apache Iceberg, and transition strategies.

Drake Nguyen

Founder · System Architect

The Evolution of Data Architecture

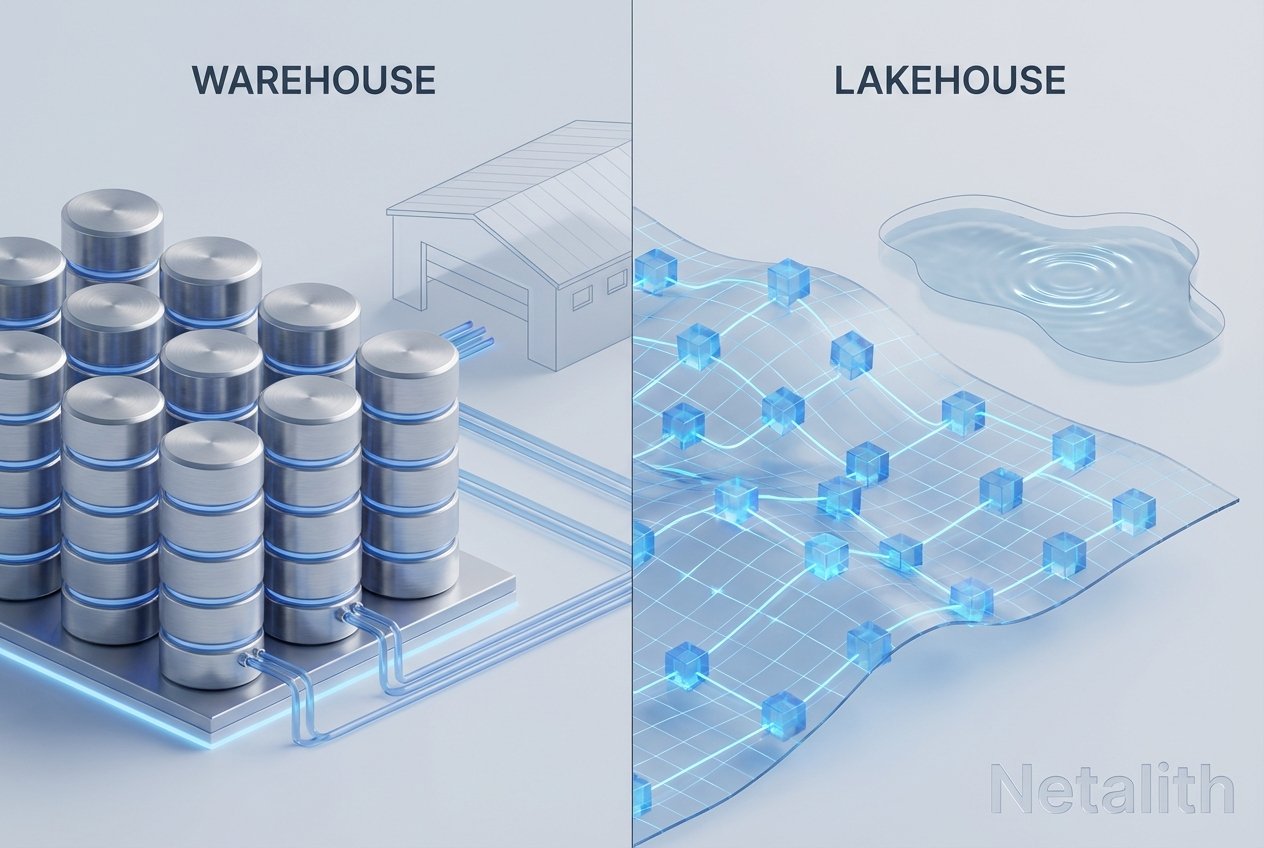

As organizations grapple with unprecedented volumes of information, evaluating the modern data stack has become a critical priority for IT professionals and cloud architects. For years, a traditional data warehouse was sufficient to drive business intelligence. Today, the debate over data lakehouse vs. data warehouse is reshaping how enterprises store, process, and analyze their most valuable assets.

Modern workloads demand more than structured storage. Companies increasingly need systems that can handle real-time streaming, machine learning datasets, and traditional business intelligence on a single unified platform. In this guide, we unpack the 2026 debate over modern warehouse patterns and explore which architectural model makes the most sense for your organization.

What is a Data Warehouse? (Basics & Architecture)

To understand the current shifts in technology, we must first revisit data warehousing basics. A data warehouse is a centralized repository engineered specifically for querying and analyzing highly structured data. Through an ETL (Extract, Transform, Load) process, data is cleaned, structured, and loaded into predefined schemas before it can be queried.
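The three ETL stages can be sketched in a few lines. This is a minimal illustration using Python's built-in `sqlite3` as a stand-in for a warehouse; the `RAW_ORDERS` records, the `orders` table, and the function names are assumptions made up for the example, not part of any particular product.

```python
import sqlite3

# Hypothetical raw records, as they might arrive from a source system.
RAW_ORDERS = [
    {"order_id": "1", "customer": " alice ", "amount": "19.99"},
    {"order_id": "2", "customer": "BOB", "amount": "not-a-number"},
    {"order_id": "3", "customer": "Carol", "amount": "5.00"},
]


def extract():
    """Extract: read raw records from the source (here, an in-memory list)."""
    return list(RAW_ORDERS)


def transform(rows):
    """Transform: normalize names, cast types, and drop rows that fail validation."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # reject rows whose amount is not numeric
        clean.append((int(row["order_id"]), row["customer"].strip().title(), amount))
    return clean


def load(conn, rows):
    """Load: write the cleaned rows into a table with a fixed schema."""
    conn.execute(
        "CREATE TABLE orders ("
        "order_id INTEGER PRIMARY KEY, customer TEXT NOT NULL, amount REAL NOT NULL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract()))
loaded = conn.execute(
    "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
).fetchall()
print(loaded)
```

The point of the sketch is the ordering: validation and typing happen before the load, so only rows that fit the schema ever become queryable.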

When comparing OLAP vs OLTP (Online Analytical Processing vs. Online Transaction Processing), traditional warehouses are firmly built for OLAP workloads. They are designed to aggregate massive volumes of historical data to power dashboards and business intelligence reporting. In a star schema, a central fact table references the surrounding dimension tables, a layout optimized for exactly these read-heavy analytical queries.
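As a rough sketch of that layout, the example below builds a tiny star schema in an in-memory SQLite database and runs a typical OLAP-style aggregation. The table names (`fact_sales`, `dim_product`, `dim_date`) and the sample values are hypothetical and chosen only for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Dimension tables hold descriptive attributes.
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, category TEXT);
    CREATE TABLE dim_date (date_id INTEGER PRIMARY KEY, year INTEGER);

    -- The fact table holds measures plus foreign keys to each dimension.
    CREATE TABLE fact_sales (
        product_id INTEGER REFERENCES dim_product(product_id),
        date_id INTEGER REFERENCES dim_date(date_id),
        amount REAL
    );

    INSERT INTO dim_product VALUES (1, 'books'), (2, 'games');
    INSERT INTO dim_date VALUES (10, 2023), (11, 2024);
    INSERT INTO fact_sales VALUES
        (1, 10, 20.0), (1, 11, 30.0), (2, 11, 50.0), (2, 11, 25.0);
    """
)

# A read-heavy analytical query: join facts to dimensions, then aggregate.
revenue_by_category_year = conn.execute(
    """
    SELECT p.category, d.year, SUM(f.amount) AS revenue
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_id = f.product_id
    JOIN dim_date AS d ON d.date_id = f.date_id
    GROUP BY p.category, d.year
    ORDER BY p.category, d.year
    """
).fetchall()
print(revenue_by_category_year)
```

Every analytical question follows the same shape: start from the fact table, join outward to whichever dimensions the report slices by, and aggregate the measures.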

In recent years, cloud data warehouse architecture has matured significantly. Platforms have separated compute from storage to lower costs and improve scalability. Yet even with these improvements, warehouses still struggle to manage raw, unstructured data such as video, audio, or complex text logs natively and cost-effectively.

What is a Data Lakehouse? (Defining the Modern Stack)

A data lakehouse is an architecture that combines the flexible, low-cost storage of a data lake with the data management and structural features of a data warehouse. The core concept to understand is convergence.

The key to a lakehouse is its metadata layer. This tier sits on top of inexpensive cloud object storage (such as Amazon S3 or Azure Data Lake Storage) and provides the structural integrity previously found only in warehouses. Because of this metadata layer, data engineers can execute ACID transactions directly on data lake files.

This means you can UPDATE, DELETE, and MERGE records directly on your massive data lake files without risking data corruption, effectively bringing the reliability of a traditional database to highly scalable object storage.
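To make the idea of a metadata layer concrete, here is a deliberately simplified model: data files are immutable, and an ordered log of small JSON commits records which files are live. It is a toy sketch under stated assumptions, not the actual protocol of Delta Lake or Apache Iceberg, and the `ToyTable` class, file names, and `_log` directory are all invented for illustration.

```python
import json
import os
import tempfile


class ToyTable:
    """Toy table: immutable data files plus an ordered log of JSON commits."""

    def __init__(self, root):
        self.root = root
        self.log_dir = os.path.join(root, "_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def _versions(self):
        return sorted(int(name.split(".")[0]) for name in os.listdir(self.log_dir))

    def commit(self, add=(), remove=()):
        """Write new data files, then publish them with a single log entry."""
        version = len(self._versions())
        added = []
        for i, rows in enumerate(add):
            name = f"part-{version}-{i}.json"
            with open(os.path.join(self.root, name), "w") as f:
                json.dump(rows, f)
            added.append(name)
        entry = os.path.join(self.log_dir, f"{version:08d}.json")
        # Mode "x" fails if the entry exists, so two writers cannot claim one version.
        with open(entry, "x") as f:
            json.dump({"add": added, "remove": list(remove)}, f)
        return version

    def files(self, version=None):
        """Replay the log up to `version` to find the live data files."""
        live = []
        for v in self._versions():
            if version is not None and v > version:
                break
            with open(os.path.join(self.log_dir, f"{v:08d}.json")) as f:
                entry = json.load(f)
            live = [n for n in live if n not in entry["remove"]] + entry["add"]
        return live

    def read(self, version=None):
        rows = []
        for name in self.files(version):
            with open(os.path.join(self.root, name)) as f:
                rows.extend(json.load(f))
        return rows

    def update(self, predicate, change):
        """UPDATE as copy-on-write: rewrite affected files, swap them in one commit."""
        new_files, removed = [], []
        for name in self.files():
            with open(os.path.join(self.root, name)) as f:
                rows = json.load(f)
            if any(predicate(r) for r in rows):
                new_files.append([change(r) if predicate(r) else r for r in rows])
                removed.append(name)
        return self.commit(add=new_files, remove=removed)


table = ToyTable(tempfile.mkdtemp())
v0 = table.commit(add=[[{"id": 1, "amount": 10}, {"id": 2, "amount": 20}]])
v1 = table.update(lambda r: r["id"] == 2, lambda r: {**r, "amount": 25})

current_rows = table.read()
original_rows = table.read(version=v0)
print(current_rows, original_rows)
```

Because readers only ever see files that a committed log entry names, a half-finished write is invisible, and reading at an older version falls out of the same replay logic. Real table formats add much more (schemas, statistics, conflict detection), but the separation between immutable data files and a small transactional log is the shared idea.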

Data Lakehouse vs. Data Warehouse: Key Differences & Architectural Guide

To compare the two architectures, it helps to look at how each handles the complete data lifecycle. Several differences stand out:

- Data Types: A traditional warehouse primarily handles structured data. A lakehouse can store and process structured, semi-structured, and unstructured data side by side.

- Cost Effectiveness: Warehouses typically store data in proprietary formats, which can become expensive at petabyte scale. A lakehouse relies on low-cost object storage combined with open-source file formats such as Parquet.

- Workload Flexibility: A data warehouse is optimized primarily for BI and SQL analytics. A lakehouse supports BI, machine learning, data science workloads, and real-time streaming analytics on the same data.

- Data Redundancy: In a typical warehouse-centric setup, raw data is kept in a separate data lake and a structured copy is loaded into the warehouse. A lakehouse avoids this duplication by querying the storage layer directly through its metadata layer.

Building a Data Lakehouse: Delta Lake & Apache Iceberg Tutorial

If you are ready to move from theory to practice, building a lakehouse on an open table format is the logical next step. To enable warehouse-like features on a data lake, you need an open table format. The most widely used choices are Delta Lake, Apache Iceberg, and Apache Hudi.

Both Delta Lake and Apache Iceberg provide the metadata layer needed for ACID compliance and schema evolution.

Delta Lake Approach

Delta Lake is most tightly integrated with Apache Spark. Converting existing Parquet files to Delta format enables versioning (time travel) and reliable updates, deletes, and merges.

```python
# Example: converting an existing Parquet table to Delta format.
# Assumes `spark` is an active SparkSession configured with the Delta Lake extensions.
from delta.tables import DeltaTable

DeltaTable.convertToDelta(spark, "parquet.`/mnt/data-lake/sales_data`")
```

Apache Iceberg Approach

Apache Iceberg, by contrast, takes an engine-agnostic approach, allowing engines such as Trino, Presto, Flink, and Spark to operate safely on the same tables concurrently.

```sql
-- Example: creating an Iceberg table with Spark SQL
CREATE TABLE local.db.sales_data (
    order_id bigint,
    customer_id string,
    amount double,
    order_date date
)
USING iceberg
PARTITIONED BY (days(order_date));
```

With these table formats in place, you can unify batch and stream processing: a single table can act as a sink for Kafka streams while simultaneously serving complex batch reporting queries.
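A note on the `PARTITIONED BY (days(order_date))` clause in the Iceberg example: the partition value is derived from the column rather than stored as a separate field, and queries that filter on `order_date` can skip partitions outside the requested range. The plain-Python sketch below illustrates that idea only; it is not Iceberg code, and the helper names and sample rows are hypothetical.

```python
from collections import defaultdict
from datetime import date

EPOCH = date(1970, 1, 1)


def days_transform(d):
    """Map a date to whole days since 1970-01-01 (a day-granularity partition value)."""
    return (d - EPOCH).days


def partition_rows(rows):
    """Group rows by the partition value derived from order_date."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[days_transform(row["order_date"])].append(row)
    return partitions


def scan(partitions, start, end):
    """Read only the partitions whose day value falls within [start, end]."""
    lo, hi = days_transform(start), days_transform(end)
    touched = [key for key in sorted(partitions) if lo <= key <= hi]
    rows = [row for key in touched for row in partitions[key]]
    return rows, touched


# Hypothetical sample rows matching the sales_data columns.
rows = [
    {"order_id": 1, "order_date": date(2024, 1, 1), "amount": 10.0},
    {"order_id": 2, "order_date": date(2024, 1, 2), "amount": 20.0},
    {"order_id": 3, "order_date": date(2024, 1, 2), "amount": 5.0},
    {"order_id": 4, "order_date": date(2024, 3, 15), "amount": 7.5},
]
partitions = partition_rows(rows)
matched, touched = scan(partitions, date(2024, 1, 2), date(2024, 1, 31))
print([r["order_id"] for r in matched], len(touched), "of", len(partitions), "partitions read")
```

In a real table the pruning decision is made from partition metadata before any data file is opened, which is where most of the query-time savings come from.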

Transitioning from Data Warehouse to Data Lakehouse

Transitioning from a data warehouse to a data lakehouse requires careful planning. It is typically a phased migration rather than a single cutover. First, teams must audit their existing ETL pipelines.

On AWS or Azure, the usual starting point is to establish the foundational object storage (S3 or ADLS Gen2) and choose an open table format (Iceberg or Delta). From there, organizations typically begin by migrating their heaviest, most expensive raw data workloads out of the warehouse compute engines and into the lakehouse.

Crucially, maintaining data governance during migration relies on the lakehouse metadata layer. Registering tables in a central catalog, such as AWS Glue or a Hive Metastore, helps keep security policies and access controls intact as data moves between architectures.
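One way to turn "heaviest, most expensive raw data workloads first" into a concrete plan is to rank an inventory of workloads and group them into phases. The sketch below is only an illustration: the `Workload` fields, the cost and volume figures, and the phase size are all made-up assumptions, and a real audit would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    monthly_cost_usd: float  # hypothetical warehouse spend
    data_tb: float           # hypothetical data volume
    raw_data: bool           # True if it mostly processes raw or unstructured data


def plan_phases(workloads, phase_size=2):
    """Rank raw-data workloads by cost, then volume, and split them into phases."""
    candidates = [w for w in workloads if w.raw_data]
    ranked = sorted(
        candidates, key=lambda w: (w.monthly_cost_usd, w.data_tb), reverse=True
    )
    return [
        [w.name for w in ranked[i:i + phase_size]]
        for i in range(0, len(ranked), phase_size)
    ]


# Illustrative inventory; every number here is invented for the example.
inventory = [
    Workload("clickstream_logs", 12000.0, 400.0, True),
    Workload("finance_reporting", 3000.0, 2.0, False),
    Workload("iot_telemetry", 8000.0, 250.0, True),
    Workload("support_tickets_text", 1500.0, 10.0, True),
]
phases = plan_phases(inventory)
print(phases)
```

Structured BI workloads such as the hypothetical `finance_reporting` stay in the warehouse in this plan, which matches the phased approach described above: move what the warehouse handles worst first, and revisit the rest later.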

Conclusion: Is Data Lakehouse the Future of Data Warehousing?

As we look at the trajectory of the modern data stack, many industry leaders are asking: is the data lakehouse the future of data warehousing? For most enterprises, the answer is a resounding yes. The ability to unify diverse data types and disparate workloads into a single source of truth provides a clear competitive advantage.

While the choice between a data lakehouse and a data warehouse depends on your current infrastructure and legacy requirements, the shift toward open, scalable, and versatile architectures is clear. By adopting lakehouse patterns today, organizations position themselves for the next generation of data-driven innovation.