Modern C++ Multithreading Tutorial: Mastering Concurrency and Parallelism

A comprehensive C++ multithreading tutorial covering std::thread, std::async, mutexes, and modern C++26 atomic operations for high-performance concurrency.

Drake Nguyen

Founder · System Architect

Introduction to Our C++ Multithreading Tutorial

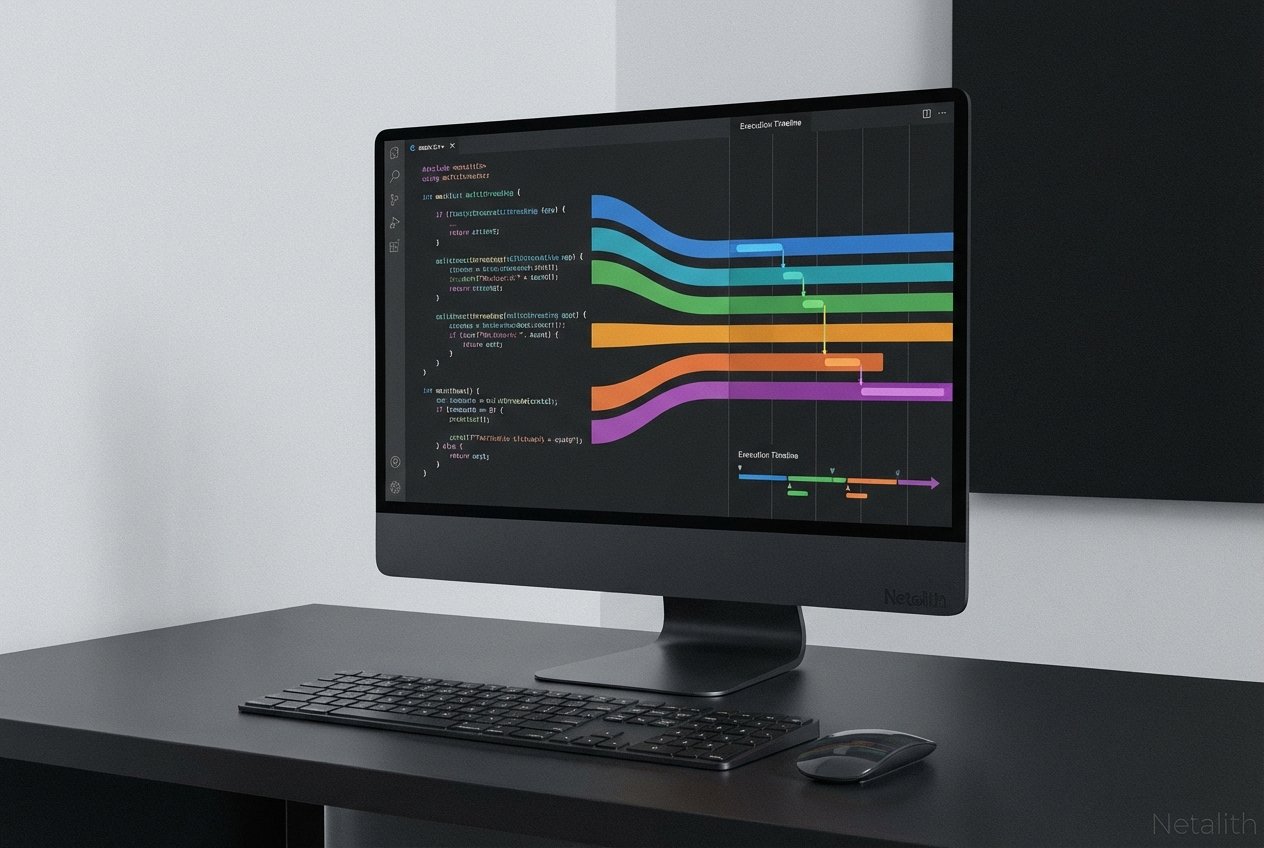

Welcome to this C++ multithreading tutorial. As core counts keep rising, concurrency in C++ has shifted from a niche skill to a core part of modern software engineering. This guide walks through modern C++ multithreading and concurrency, from launching your first thread to coordinating many of them safely.

At its core, parallel programming in C++ lets you execute multiple sequences of instructions at the same time, so that a multi-core processor does useful work on every core instead of leaving most of them idle. Throughout this C++ multithreading tutorial, we will explore everything from basic thread management to advanced synchronization techniques, with practical knowledge you can apply to high-performance codebases.

Managing Threads: How to Use std::thread and std::async in C++

Understanding how to use std::thread and std::async in C++ is the first step for any developer working with C++ threads. Since C++11, the standard library has provided native, portable mechanisms for starting new threads of execution without relying on platform-specific APIs.

When you create a std::thread, you explicitly launch a new thread of execution. Direct thread management can be heavy-handed and error-prone, however. That is where std::async shines: it is a higher-level abstraction that runs a callable asynchronously and delivers its return value, or any exception it throws, through a std::future. Note that the standard does not require a thread pool. With std::launch::async the task runs as if on a new thread, with std::launch::deferred it runs lazily on the thread that calls get() or wait(), and the default policy lets the implementation choose between the two.

Managing these threads well means making sure the main thread does not finish before its workers do. With std::thread, you must call .join() (or .detach()) before the object is destroyed; destroying a still-joinable std::thread calls std::terminate. With std::async, the returned std::future owns the shared state, and when the task was launched with std::launch::async, the future's destructor blocks until the task completes.
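
As a minimal sketch of explicit thread management, the snippet below splits a sum across two std::thread objects and joins both before combining the partial results. The names sumRange and sumWithTwoThreads are hypothetical helpers invented for this illustration, not part of any library.

```cpp
#include <cstddef>
#include <functional>
#include <numeric>
#include <thread>
#include <vector>

// Hypothetical helper: sums values[begin, end) and stores the result in `out`.
void sumRange(const std::vector<int>& values, std::size_t begin,
              std::size_t end, long long& out) {
    out = std::accumulate(values.begin() + begin, values.begin() + end, 0LL);
}

// Splits the work across two threads and joins both before combining.
long long sumWithTwoThreads(const std::vector<int>& values) {
    const std::size_t mid = values.size() / 2;
    long long left = 0;
    long long right = 0;

    // std::thread copies its arguments by default, so references must be
    // wrapped in std::ref / std::cref.
    std::thread first(sumRange, std::cref(values), 0, mid, std::ref(left));
    std::thread second(sumRange, std::cref(values), mid, values.size(),
                       std::ref(right));

    // Each thread writes only its own variable, so no mutex is needed here.
    // join() must run before the std::thread objects are destroyed.
    first.join();
    second.join();

    return left + right;
}
```

Calling sumWithTwoThreads on a vector returns the same total as a single-threaded std::accumulate. A production version would also guard against an exception between the two thread constructions, for example with a small RAII wrapper that joins in its destructor.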

Future and Promise C++ Examples

When using std::async, you often need to retrieve a value computed by the asynchronous task once it completes. The following example shows this asynchronous data flow with a std::future:

```cpp
#include <iostream>
#include <future>
#include <thread>

// A simple asynchronous task
int calculateSquare(int x) {
    return x * x;
}

int main() {
    // std::async returns a std::future
    std::future<int> result = std::async(std::launch::async, calculateSquare, 10);

    // Do other work here...

    // Retrieve the result (blocks until the result is ready)
    std::cout << "The square is: " << result.get() << std::endl;

    return 0;
}
```

By using futures and promises, you establish a safe communication channel between threads without relying on raw shared variables, which is a hallmark of clean concurrent programming in modern C++.
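
The example above uses only the std::future half of the pair. As a hedged sketch of the other half, the snippet below hands a std::promise to a worker thread, which fulfils it with set_value(). The functions produceGreeting and fetchGreeting are hypothetical names chosen for this illustration.

```cpp
#include <future>
#include <string>
#include <thread>
#include <utility>

// Hypothetical worker: fulfils the promise once its result is ready.
void produceGreeting(std::promise<std::string> promise) {
    promise.set_value("hello from the worker");
}

std::string fetchGreeting() {
    std::promise<std::string> promise;

    // Obtain the future before the promise is moved into the thread.
    std::future<std::string> result = promise.get_future();

    // std::promise is move-only, so ownership is transferred to the worker.
    std::thread worker(produceGreeting, std::move(promise));

    // get() blocks until the worker calls set_value().
    std::string greeting = result.get();
    worker.join();
    return greeting;
}
```

A promise is the right tool when the producing code is not a simple function call that std::async can wrap, for example when a long-running thread delivers a result partway through its work.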

Thread Synchronization: Mutex and lock_guard Tutorial

Consider this section a mini mutex and lock_guard tutorial. Whenever multiple threads access and modify shared data, you need synchronization to maintain data integrity. A std::mutex (mutual exclusion) guarantees that only one thread at a time can hold the lock, so code that touches the shared data only while holding the lock never runs concurrently with itself.

However, manually locking and unlocking a mutex is risky: if a function throws an exception or returns early before unlocking, the mutex stays locked and your program can deadlock. The standard practice for building safe concurrent data structures in C++ is RAII (Resource Acquisition Is Initialization) via std::lock_guard or, since C++17, std::scoped_lock, which can also lock several mutexes at once without deadlocking.
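
To illustrate the RAII approach with more than one mutex, here is a minimal sketch that uses std::scoped_lock to lock two mutexes together. The Account type and transfer function are hypothetical and exist only for this example.

```cpp
#include <mutex>

// Hypothetical account type used only for illustration.
struct Account {
    std::mutex guard;
    long long balance = 0;
};

// Moves `amount` between two accounts; returns false if funds are insufficient.
bool transfer(Account& from, Account& to, long long amount) {
    if (&from == &to) {
        return true;  // nothing to do, and avoids locking one mutex twice
    }

    // std::scoped_lock (C++17) acquires both mutexes using a
    // deadlock-avoidance algorithm and releases them on every exit path,
    // including early returns and exceptions.
    std::scoped_lock lock(from.guard, to.guard);

    if (from.balance < amount) {
        return false;
    }
    from.balance -= amount;
    to.balance += amount;
    return true;
}
```

Because both locks are taken in a single step, two threads calling transfer(a, b, ...) and transfer(b, a, ...) at the same time cannot deadlock on lock ordering.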

Avoiding Race Conditions in C++ Multithreaded Apps

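The primary reason we use mutexes is to avoid race conditions in multithreaded C++ apps. A data race occurs when two or more threads access the same variable concurrently, at least one of them writes to it, and nothing synchronizes the accesses. In C++ this is undefined behavior; in practice it shows up as lost updates, non-deterministic results, or memory corruption. The following example protects a shared counter with std::lock_guard: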

```cpp
#include <iostream>
#include <thread>
#include <mutex>
#include <vector>

int sharedCounter = 0;
std::mutex counterMutex;

void incrementCounter() {
    for (int i = 0; i < 1000; ++i) {
        // Safe access via lock_guard
        std::lock_guard<std::mutex> lock(counterMutex);
        sharedCounter++;
    }
}

int main() {
    std::vector<std::thread> threads;

    for (int i = 0; i < 10; ++i) {
        threads.emplace_back(incrementCounter);
    }

    for (auto& th : threads) {
        th.join();
    }

    std::cout << "Final counter value: " << sharedCounter << std::endl;

    return 0;
}
```

Advanced Synchronization: Condition Variables and Atomics

As your programs grow more complex, simple mutexes might not be enough for efficient coordination. You will often need more advanced synchronization techniques to build efficient concurrent data structures in C++ that avoid "busy-waiting."

Condition Variables in C++ Explained

Let's look at how condition variables work in C++. A std::condition_variable lets a thread block until a particular condition is met. Instead of constantly polling a boolean flag, which wastes CPU cycles, the waiting thread sleeps until it is notified. When another thread modifies the shared state, it calls notify_one() or notify_all() to wake the waiting thread or threads.

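std::condition_variable::wait requires a std::unique_lock<std::mutex>, because it must unlock the mutex while the thread sleeps and re-lock it before returning. Always pass a predicate (usually a lambda function) or re-check the condition in a loop: this protects against spurious wakeups and against notifications that were sent before the wait began.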
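
The pattern described above can be sketched as a small blocking queue. This is an illustrative example under simple assumptions (one producer, one consumer, int payloads); MessageQueue and sumThroughQueue are hypothetical names, not standard library types.

```cpp
#include <condition_variable>
#include <mutex>
#include <queue>
#include <thread>

// Hypothetical blocking queue built on a mutex and a condition variable.
class MessageQueue {
public:
    void push(int value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push(value);
        }
        // Notify after releasing the lock so the woken thread can proceed.
        ready_.notify_one();
    }

    int pop() {
        std::unique_lock<std::mutex> lock(mutex_);
        // The predicate is re-checked after every wakeup, so a spurious
        // wakeup simply puts the thread back to sleep.
        ready_.wait(lock, [this] { return !items_.empty(); });
        int value = items_.front();
        items_.pop();
        return value;
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<int> items_;
};

// Produces 1..count on a worker thread and sums them on the calling thread.
int sumThroughQueue(int count) {
    MessageQueue queue;
    std::thread producer([&queue, count] {
        for (int i = 1; i <= count; ++i) {
            queue.push(i);
        }
    });

    int total = 0;
    for (int i = 0; i < count; ++i) {
        total += queue.pop();  // sleeps while the queue is empty
    }
    producer.join();
    return total;
}
```

The consumer never spins: whenever the queue is empty it sleeps inside wait() and only runs again once the producer has pushed an item and called notify_one().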

Atomic Operations in C++26

For simple data types, the standard library offers a lighter-weight alternative to mutexes: atomics. std::atomic has been part of the language since C++11, and it remains the foundation for atomic operations in C++26. Every operation on a std::atomic object is indivisible, so no thread can observe a partial read or write, and concurrent access is not a data race. Atomics for small types such as int are lock-free on most mainstream platforms, but the standard does not guarantee it; is_lock_free() or is_always_lock_free tells you what your target provides.
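
As a small sketch of std::atomic in practice, the function below increments a shared counter from several threads without any mutex. It uses only facilities available since C++11; countWithAtomics is a hypothetical name chosen for this example.

```cpp
#include <atomic>
#include <thread>
#include <vector>

// Increments one shared std::atomic<int> from several threads, no mutex.
int countWithAtomics(int threadCount, int incrementsPerThread) {
    std::atomic<int> counter{0};
    std::vector<std::thread> threads;

    for (int t = 0; t < threadCount; ++t) {
        threads.emplace_back([&counter, incrementsPerThread] {
            for (int i = 0; i < incrementsPerThread; ++i) {
                // Relaxed ordering is enough here: only the counter itself
                // matters, and it does not publish any other data.
                counter.fetch_add(1, std::memory_order_relaxed);
            }
        });
    }

    // join() synchronizes with each thread's completion, so the final
    // load observes every increment.
    for (auto& thread : threads) {
        thread.join();
    }
    return counter.load();
}
```

This is the atomic counterpart of the mutex-protected counter shown earlier. When an atomic is used to signal that other data is ready, the default sequentially consistent ordering or an acquire/release pair is needed instead of relaxed ordering.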

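C++26 extends the toolkit for lock-free programming: it adds hazard pointers (<hazard_pointer>) and read-copy-update (<rcu>) for safe memory reclamation in lock-free data structures, along with further refinements to the atomics library. Compiler and standard library support is still arriving, so check your toolchain before relying on these features. Understanding them is valuable for advanced work in highly concurrent, low-latency environments.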

Leveraging Parallel Algorithms in the STL

Writing raw loops over threads is error-prone and tedious. The parallel algorithms added to the standard library in C++17 offer a simpler route. By passing an execution policy from the <execution> header (such as std::execution::par or std::execution::par_unseq) as the first argument to a standard algorithm, you can parallelize tasks like sorting, searching, and transforming data with very little change to your code.

Prefer these standard tools where they fit. The standard library handles the underlying threading mechanics, which lets you focus on business logic. You are still responsible for making sure the callables you pass do not introduce data races, and with par_unseq they must not acquire locks. Parallelism also has overhead, so measure before assuming a speedup on small inputs. The goal of parallel programming in C++ remains the same: clean, expressive code that scales across CPU cores.
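
As a brief sketch of the execution-policy overloads, the function below sorts a vector and sums it with std::execution::par. The name sortAndSum is hypothetical. Toolchain support varies: with GCC's libstdc++, for instance, the parallel backend typically relies on Intel TBB being installed and linked, and an implementation is allowed to fall back to sequential execution.

```cpp
#include <algorithm>
#include <execution>
#include <numeric>
#include <vector>

// Sorts the vector in place and returns the sum of its elements.
// Both calls request parallel execution through std::execution::par.
long long sortAndSum(std::vector<int>& values) {
    std::sort(std::execution::par, values.begin(), values.end());

    // std::reduce may combine elements in any order, which is what allows
    // it to run in parallel; that is safe for integer addition.
    return std::reduce(std::execution::par, values.begin(), values.end(), 0LL);
}
```

Compared with the sequential versions, the only change is the extra first argument, which is what makes these algorithms an attractive first step before reaching for raw threads.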

Conclusion: Mastering Our C++ Multithreading Tutorial

Thank you for exploring this C++ multithreading tutorial. We have covered the fundamentals of thread management, the safety of mutexes and locks, the finer control of condition variables, and the power of atomics and parallel STL algorithms, including what C++26 adds. Mastery of concurrent programming in modern C++ is a continuous journey that requires both theoretical knowledge and hands-on practice.

The key to success is to favor high-level abstractions like async tasks and parallel algorithms wherever possible, dropping down to raw threads and locks only when fine-grained control is mandatory. Be sure to bookmark this modern C++ concurrency and parallelism guide from Netalith as you continue to build faster, more efficient applications.