ReLU Function in Python

A guide to the ReLU function in Python: implementations (pure Python and NumPy), derivatives, the dying ReLU problem, leaky ReLU, comparisons, and practical tips for deep learning activation functions.

Drake Nguyen

Founder · System Architect

Introduction

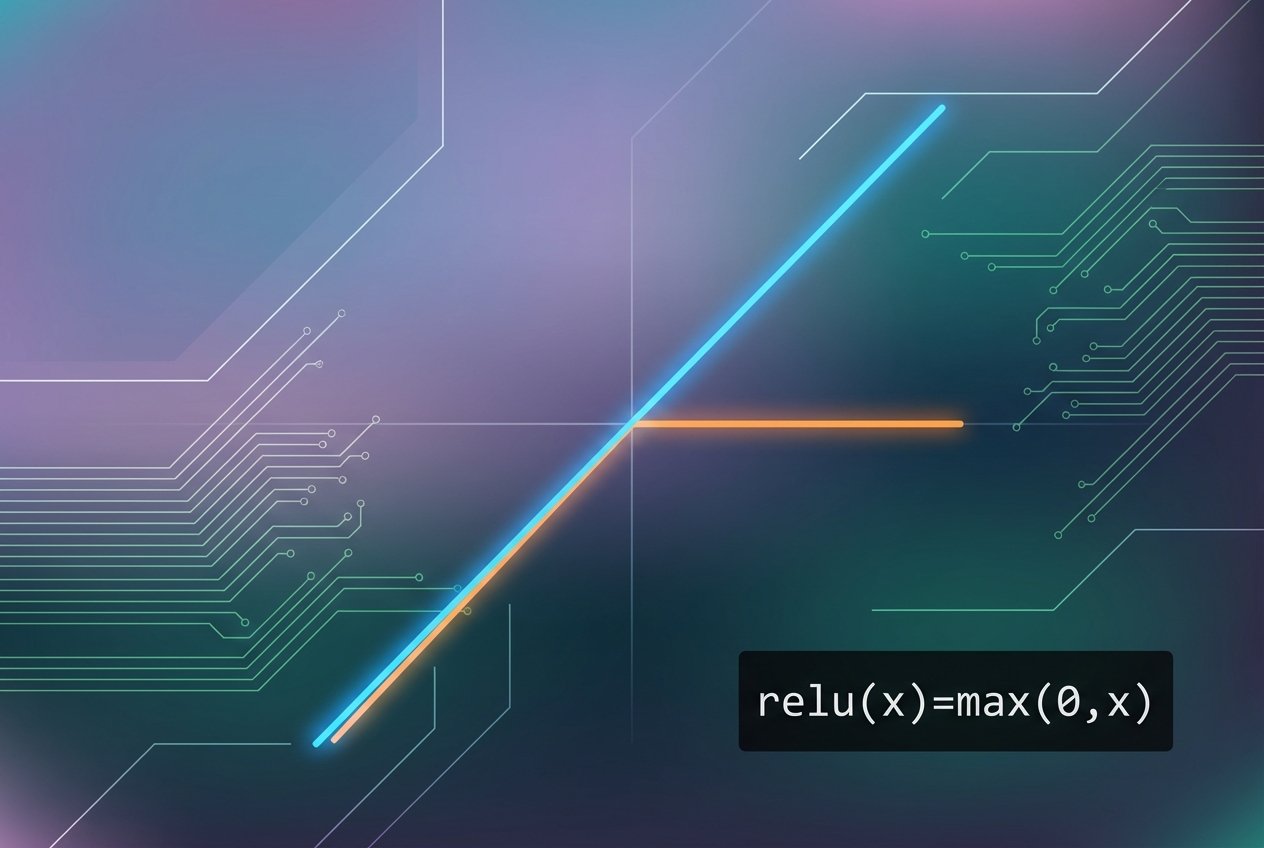

ReLU, the Rectified Linear Unit, is a simple yet powerful activation function used widely in deep learning. It returns zero for negative inputs and passes positive values unchanged, that is, f(x) = max(0, x). This makes it cheap to compute, and it often helps neural networks train faster than older saturating activation functions such as sigmoid and tanh.

Implementing ReLU function in Python (pure Python and NumPy)

Here are two straightforward implementations: one in pure Python for a single scalar, and one using NumPy for efficient array operations.

```python
import numpy as np

# Pure Python implementation (single scalar input)
def relu(x):
    return max(0.0, x)

# Vectorized NumPy implementation (arrays of any shape)
def relu_numpy(x_array):
    return np.maximum(0.0, x_array)
```

These snippets show the canonical max(0, x) behavior of ReLU. Use the NumPy version when applying the activation to vectors or matrices inside a neural network.
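As a minimal sketch of that use, the example below applies the NumPy version to the pre-activations of a tiny dense layer. The weights `W`, bias `b`, and input `x` are made-up values chosen only for illustration.

```python
import numpy as np

def relu_numpy(x_array):
    return np.maximum(0.0, x_array)

# Hypothetical layer parameters and input (illustrative values only)
W = np.array([[1.0, -2.0],
              [0.5, 0.5]])
b = np.array([0.0, -1.0])
x = np.array([1.0, 2.0])

z = W @ x + b        # pre-activations: [-3.0, 0.5]
a = relu_numpy(z)    # activations:     [0.0, 0.5]
print(a)
```

The negative pre-activation is clipped to zero, while the positive one passes through unchanged.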

ReLU derivative and the gradient of ReLU

During backpropagation we need the ReLU derivative (the gradient of ReLU). ReLU is a piecewise linear function, so its derivative is piecewise constant:

For x > 0: derivative = 1. For x < 0: derivative = 0. At x = 0 the derivative is undefined but conventionally set to 0 or 1 depending on implementation.

```python
import numpy as np

# Scalar derivative; uses the convention f'(0) = 0
def relu_derivative(x):
    if x > 0:
        return 1.0
    else:
        return 0.0

# Vectorized derivative for arrays
def relu_derivative_numpy(x_array):
    return (np.asarray(x_array) > 0).astype(float)
```
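One way to sanity-check a hand-written derivative is to compare it against a central finite difference. The sketch below does this for the scalar version; the helper `numerical_derivative` and the step size `eps` are illustrative assumptions, not part of any library.

```python
def relu(x):
    return max(0.0, x)

def relu_derivative(x):
    return 1.0 if x > 0 else 0.0

# Hypothetical helper: central finite-difference approximation of f'(x)
def numerical_derivative(f, x, eps=1e-6):
    return (f(x + eps) - f(x - eps)) / (2 * eps)

# Compare analytic and numerical derivatives away from the kink at x = 0
checks = {
    x: abs(numerical_derivative(relu, x) - relu_derivative(x))
    for x in (-2.0, -0.5, 0.5, 3.0)
}
print(checks)

# At x = 0 the two one-sided slopes (0 and 1) disagree, so the central
# difference lands halfway between them instead of matching either convention.
print(numerical_derivative(relu, 0.0))  # 0.5
```

The mismatch at zero is expected: it reflects that the derivative is undefined there, not a bug in the implementation.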

Note: because the gradient is zero for negative inputs, a neuron whose pre-activation is negative for every training example receives no gradient and stops learning (the "dying ReLU" problem). That motivates alternatives like leaky ReLU.
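To make the dying ReLU problem concrete, the following sketch computes the weight gradient of a single neuron under a squared-error loss. The neuron, the loss, and all numeric values are assumptions chosen for illustration.

```python
def relu(x):
    return max(0.0, x)

def relu_derivative(x):
    return 1.0 if x > 0 else 0.0

def weight_gradient(w, b, x, target):
    """Gradient of 0.5 * (relu(w*x + b) - target)**2 with respect to w."""
    z = w * x + b
    a = relu(z)
    # Chain rule: dL/da * da/dz * dz/dw
    return (a - target) * relu_derivative(z) * x

inputs = [0.5, 1.0, 2.0]

# A neuron whose pre-activation is positive receives a usable gradient
healthy = [weight_gradient(1.0, 0.0, x, target=1.0) for x in inputs]

# A large negative bias makes z < 0 for every input, so every gradient is zero
dead = [weight_gradient(1.0, -10.0, x, target=1.0) for x in inputs]

print(healthy)
print(dead)
```

Because every gradient for the second neuron is zero, gradient descent never changes its weight, however large the error is.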

Leaky ReLU: why and how

Leaky ReLU introduces a small slope for negative inputs so gradients remain nonzero and neurons continue to update. A common choice for the slope is α = 0.01.

```python
import numpy as np

# Scalar leaky ReLU
def leaky_relu(x, alpha=0.01):
    return x if x >= 0 else alpha * x

# Vectorized leaky ReLU for arrays
def leaky_relu_numpy(x_array, alpha=0.01):
    return np.where(x_array >= 0, x_array, alpha * x_array)

# Scalar derivative; uses the convention f'(0) = 1
def leaky_relu_derivative(x, alpha=0.01):
    return 1.0 if x >= 0 else alpha
```

These implementations show how to write ReLU and its leaky variant in Python from scratch. Leaky ReLU reduces the risk of dead neurons by providing a small, nonzero gradient (α) for negative inputs.
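The difference in gradients can be seen side by side in a short sketch. The vectorized helper `leaky_relu_derivative_numpy` is not defined in the text above; it is an assumed array counterpart of the scalar derivative, using the same convention at zero.

```python
import numpy as np

# Assumed vectorized counterpart of leaky_relu_derivative (slope 1 at x = 0)
def leaky_relu_derivative_numpy(x_array, alpha=0.01):
    x_array = np.asarray(x_array, dtype=float)
    return np.where(x_array >= 0, 1.0, alpha)

x = np.array([-3.0, -0.5, 0.0, 2.0])

relu_grad = (x > 0).astype(float)             # [0.0, 0.0, 0.0, 1.0]
leaky_grad = leaky_relu_derivative_numpy(x)   # [0.01, 0.01, 1.0, 1.0]

print(relu_grad)
print(leaky_grad)
```

For the negative inputs, plain ReLU passes back no gradient at all, while leaky ReLU passes back a small fraction of it.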

ReLU vs leaky ReLU — differences and when to use each

- ReLU (Rectified Linear Unit): simple, produces sparse activations, and converges faster in many cases. It may suffer from the dying ReLU problem, where some units output zero for all inputs.

- Leaky ReLU: retains the benefits of ReLU but allows a small negative slope so gradients do not vanish entirely for x < 0. Useful when training deep networks where some neurons stop updating.

- Choosing between them: start with ReLU for most problems; switch to leaky ReLU if you observe many inactive neurons or poor training dynamics.
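One rough way to check for inactive neurons is to count the units that output zero for every example in a batch. The sketch below assumes the activations are available as a 2-D array of shape (batch size, number of units); the function name `dead_unit_fraction` and the sample values are hypothetical.

```python
import numpy as np

def dead_unit_fraction(activations):
    """Fraction of units that are zero for every example in the batch."""
    activations = np.asarray(activations)
    # A unit (column) counts as inactive if all of its outputs are exactly zero
    inactive = np.all(activations == 0, axis=0)
    return float(inactive.mean())

# Illustrative post-ReLU activations: 3 examples, 3 units
acts = np.array([[0.0, 1.2, 0.0],
                 [0.0, 0.0, 0.0],
                 [0.0, 0.7, 0.3]])

print(dead_unit_fraction(acts))  # only the first unit is zero everywhere
```

A single batch can only suggest that a unit is dead. Measuring over a larger sample of the training data gives a more reliable picture.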

Practical tips and examples

- When writing custom layers, provide both the activation and its derivative for backpropagation.

- In frameworks like TensorFlow and PyTorch, the built-in activation functions are optimized; use them unless you need custom behavior or want to avoid the framework dependency.

- To visualize behavior, plot the functions and their derivatives across a range of x values to compare their shapes.
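The first tip can be sketched as a small layer object that pairs the activation with its derivative. The class name `ReLULayer` and its `forward`/`backward` interface are assumptions made for illustration and do not follow any particular framework's API.

```python
import numpy as np

class ReLULayer:
    """Minimal ReLU layer sketch: caches what backward() needs during forward()."""

    def __init__(self):
        self._mask = None

    def forward(self, x):
        x = np.asarray(x, dtype=float)
        # Remember where the input was positive (convention: f'(0) = 0)
        self._mask = x > 0
        return np.maximum(0.0, x)

    def backward(self, grad_output):
        if self._mask is None:
            raise RuntimeError("forward() must be called before backward()")
        # Chain rule: the upstream gradient passes only where the input was positive
        return np.asarray(grad_output, dtype=float) * self._mask

layer = ReLULayer()
out = layer.forward([-1.0, 0.0, 2.0])            # [0.0, 0.0, 2.0]
grad_in = layer.backward([5.0, 5.0, 5.0])        # [0.0, 0.0, 5.0]
print(out, grad_in)
```

Caching the mask in the forward pass avoids recomputing the comparison during backpropagation.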

Conclusion

ReLU is a cornerstone activation function in deep learning because of its simplicity and efficiency. Understanding its derivative, the dying ReLU problem, and alternatives such as leaky ReLU helps you pick the right activation for your network. The code examples above show how to implement ReLU and leaky ReLU in Python, both in pure Python and with NumPy.