How to calculate BLEU Score in Python?

Guide showing how to calculate BLEU score in Python with NLTK: tokenization, sentence_bleu, BLEU-4 weights, individual n-gram scores, smoothing, and corpus vs sentence BLEU.

Drake Nguyen

Founder · System Architect

What is BLEU and when to use it

BLEU (Bilingual Evaluation Understudy) is an automatic metric that quantifies how closely a candidate text matches one or more reference texts by measuring n-gram overlap and applying a brevity penalty. Although originally created for machine translation evaluation, BLEU is widely used for other text generation tasks such as image captioning and summarization. This guide shows how to calculate BLEU score in Python using the NLTK library and explains practical considerations for reliable evaluation.
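To make the two ingredients named above concrete (clipped n-gram overlap and the brevity penalty), here is a minimal pure-Python sketch. The function names `clipped_precision`, `length_penalty`, and `simple_bleu` and the example sentences are hypothetical, chosen for illustration. This is not NLTK's implementation and it applies no smoothing.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(references, candidate, n):
    """Share of candidate n-grams found in the references, where each n-gram
    counts at most as often as it occurs in any single reference."""
    cand_counts = Counter(ngrams(candidate, n))
    if not cand_counts:
        return 0.0  # candidate is shorter than n tokens
    max_ref_counts = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref_counts[gram] = max(max_ref_counts[gram], count)
    clipped = sum(min(count, max_ref_counts[gram])
                  for gram, count in cand_counts.items())
    return clipped / sum(cand_counts.values())


def length_penalty(references, candidate):
    """Brevity penalty: 1.0 unless the candidate is shorter than the
    reference whose length is closest to it."""
    c = len(candidate)
    if c == 0:
        return 0.0
    # Closest reference length; ties go to the shorter reference.
    r = min((len(ref) for ref in references),
            key=lambda ref_len: (abs(ref_len - c), ref_len))
    return 1.0 if c > r else math.exp(1 - r / c)


def simple_bleu(references, candidate, max_n=4):
    """Unsmoothed BLEU: brevity penalty times the geometric mean of the
    clipped precisions for orders 1..max_n."""
    precisions = [clipped_precision(references, candidate, n)
                  for n in range(1, max_n + 1)]
    if min(precisions) == 0:
        return 0.0  # one empty order zeroes the geometric mean
    log_mean = sum(math.log(p) for p in precisions) / max_n
    return length_penalty(references, candidate) * math.exp(log_mean)


refs = ["the cat sat on the mat".split(),
        "there is a cat on the mat".split()]
cand = "the cat sat on a mat".split()
score = simple_bleu(refs, cand)
print(round(score, 4))  # 0.5623
```

In this example the precisions for orders 1 to 4 are 6/6, 3/5, 2/4, and 1/3, the brevity penalty is 1, and the score is the fourth root of their product (0.1).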

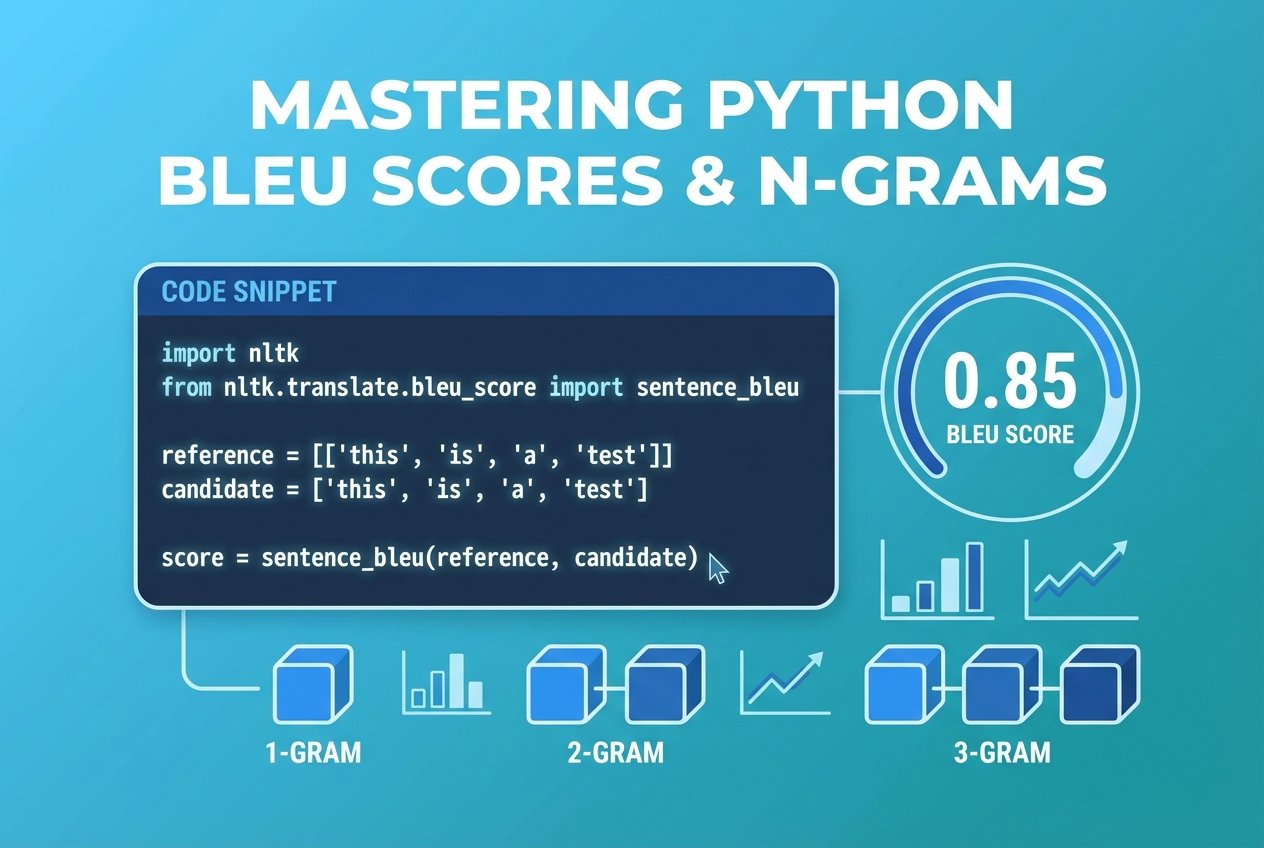

Quick overview: calculate BLEU score in Python with NLTK

NLTK provides utilities to compute sentence-level and corpus-level BLEU. The most commonly used function for single-sentence evaluation is sentence_bleu, which accepts tokenized reference lists and a tokenized candidate. By default sentence_bleu returns the cumulative 4-gram (BLEU-4) score. Below are concise examples and the reasoning behind common options such as weights and smoothing.

Preparing references and candidate (tokenized)

BLEU expects lists of tokens, not raw strings. When there are multiple reference translations, provide a list of token lists. For example:

references = [

'this is a dog'.split(),

'it is dog'.split(),

'dog it is'.split(),

'a dog, it is'.split()

]

candidate = 'it is dog'.split()

Tokenization affects scores: use the same tokenizer for references and predictions, and consider stripping punctuation or using a standard tokenizer for your language.
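As a small illustration of why this matters, the sketch below compares whitespace splitting with a regex-based tokenizer. The helper `simple_tokenize` is hypothetical, and lowercasing is an assumption that may not suit every task. A dedicated tokenizer for your language is usually the better choice.

```python
import re


def simple_tokenize(text):
    """Lowercase the text and split it into word tokens and standalone
    punctuation marks (an illustrative helper, not an NLTK function)."""
    return re.findall(r"\w+|[^\w\s]", text.lower())


reference = "A dog, it is"
whitespace_tokens = reference.split()
regex_tokens = simple_tokenize(reference)

print(whitespace_tokens)  # ['A', 'dog,', 'it', 'is']
print(regex_tokens)       # ['a', 'dog', ',', 'it', 'is']
```

With whitespace splitting the comma stays attached, so the token 'dog,' can never match 'dog' in a candidate. Whichever tokenizer you choose, apply it to both references and candidates.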

Compute sentence-level BLEU (default BLEU-4)

Calling sentence_bleu with the default weights computes the cumulative BLEU-4 score (equal weight across 1–4 grams):

from nltk.translate.bleu_score import sentence_bleu

score = sentence_bleu(references, candidate)

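print(score)  # 1.0 only when the candidate exactly matches a reference and has at least four tokens

The three-token candidate above contains no 4-grams, so recent NLTK versions emit a warning and return a score close to zero for it, even though it matches a reference exactly (see the smoothing section below).

To spell out the BLEU-4 weights explicitly, use (0.25, 0.25, 0.25, 0.25):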

sentence_bleu(references, candidate, weights=(0.25, 0.25, 0.25, 0.25))

Handling short sentences: smoothing

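BLEU is a geometric mean of the n-gram precisions, so a single n-gram order with no matches drives the whole score to zero. This happens often with very short candidates or when higher-order n-grams are rare. NLTK includes smoothing functions that produce more informative values in such cases: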

from nltk.translate.bleu_score import SmoothingFunction

smooth = SmoothingFunction().method1

sentence_bleu(references, candidate, smoothing_function=smooth)

Calculate individual n-gram precision (1-gram, 2-gram, etc.)

You can isolate a single n-gram order by putting all of the weight on it. The value returned is the modified precision for that order multiplied by the brevity penalty. Examples:

# 1-gram precision

sentence_bleu(references, candidate, weights=(1,0,0,0))

# 2-gram precision

sentence_bleu(references, candidate, weights=(0,1,0,0))

# 3-gram precision

sentence_bleu(references, candidate, weights=(0,0,1,0))

# 4-gram precision

sentence_bleu(references, candidate, weights=(0,0,0,1))

Corpus BLEU vs sentence BLEU and practical tips

When you evaluate a full dataset or an entire validation set, use corpus_bleu rather than averaging sentence_bleu scores. corpus_bleu computes n-gram counts across the whole corpus and applies the brevity penalty at corpus level, giving a more stable estimate for model comparisons.
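The difference can be seen on a tiny, made-up evaluation set. The sketch below assumes NLTK is installed; the sentences are hypothetical and were chosen so that every n-gram order has at least one match, which avoids zero-count warnings.

```python
from nltk.translate.bleu_score import corpus_bleu, sentence_bleu

# Hypothetical two-sentence evaluation set: one reference per hypothesis.
list_of_references = [
    ["the cat sat on the mat".split()],
    ["there is a dog in the garden today".split()],
]
hypotheses = [
    "the cat sat on the mat".split(),
    "there is a dog in a park today".split(),
]

# Macro view: score each sentence separately, then average the scores.
sentence_scores = [
    sentence_bleu(refs, hyp)
    for refs, hyp in zip(list_of_references, hypotheses)
]
mean_sentence_bleu = sum(sentence_scores) / len(sentence_scores)

# Micro view: pool n-gram counts and lengths over the corpus, then score once.
corpus_score = corpus_bleu(list_of_references, hypotheses)

print("mean of sentence BLEU:", round(mean_sentence_bleu, 4))
print("corpus BLEU:", round(corpus_score, 4))
```

The two numbers differ because corpus_bleu sums matched and total n-gram counts before taking the geometric mean, so longer sentences carry more weight than in a plain average of sentence scores.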

- Use the same tokenization for references and candidates; BLEU scores computed with different tokenizers are not comparable.

- Use multiple references per input whenever possible; BLEU benefits from diverse references.

- Consider smoothing for short outputs or low n-gram overlap (common in image captioning).

- Interpret BLEU alongside other metrics (e.g., METEOR, ROUGE) since BLEU measures n-gram overlap and not semantic adequacy.
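To illustrate the smoothing tip, the sketch below scores a four-token candidate that shares no 4-gram with any reference, with and without smoothing. It assumes a recent NLTK release, where the unsmoothed call emits a warning and returns a value near zero. As far as I know, method1 replaces a zero match count with a small epsilon (0.1 by default); treat that detail as an assumption and check it against your installed version.

```python
import warnings

from nltk.translate.bleu_score import SmoothingFunction, sentence_bleu

references = [
    "this is a dog".split(),
    "it is dog".split(),
    "dog it is".split(),
    "a dog, it is".split(),
]
candidate = "it is a dog".split()

# Without smoothing: the 4-gram precision is zero, which collapses the score.
with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # silence NLTK's zero-overlap warning
    plain = sentence_bleu(references, candidate)

# With method1 smoothing: the zero count no longer wipes out the other orders.
smoothed = sentence_bleu(
    references, candidate, smoothing_function=SmoothingFunction().method1
)

print("unsmoothed:", plain)
print("method1:", round(smoothed, 4))
```

Smoothed and unsmoothed scores are not comparable with each other, so report which smoothing method you used alongside the score.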

Complete example: calculate BLEU score in Python with references and a candidate

The snippet below shows sentence-level BLEU, BLEU-4 explicit weights, individual n-gram scores, and smoothing. It also indicates how to switch to corpus-level evaluation if you have many pairs.

from nltk.translate.bleu_score import sentence_bleu, corpus_bleu, SmoothingFunction

# Tokenized references (list of reference lists) and candidate

references = [

'this is a dog'.split(),

'it is dog'.split(),

'dog it is'.split(),

'a dog, it is'.split()

]

candidate = 'it is a dog'.split()

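# Sentence-level BLEU-4 (default). This candidate shares no 4-gram with any reference, so recent NLTK versions warn and return a value near zero.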

print('sentence BLEU (default):', sentence_bleu(references, candidate))

# Explicit BLEU-4 weights

print('BLEU-4 explicit weights:', sentence_bleu(references, candidate, weights=(0.25,0.25,0.25,0.25)))

# Use smoothing for short sentences

smooth = SmoothingFunction().method1

print('BLEU-4 with smoothing:', sentence_bleu(references, candidate, weights=(0.25,0.25,0.25,0.25), smoothing_function=smooth))

# Individual n-gram scores

print('1-gram score:', sentence_bleu(references, candidate, weights=(1,0,0,0)))

print('2-gram score:', sentence_bleu(references, candidate, weights=(0,1,0,0)))

# Corpus-level example (list of reference-lists for each sentence)

list_of_references = [references] # one list of references per hypothesis

hypotheses = [candidate]

print('corpus BLEU:', corpus_bleu(list_of_references, hypotheses))

Conclusion

Calculating BLEU score in Python is straightforward with NLTK's utilities. Use sentence_bleu for single-sentence checks and corpus_bleu when evaluating many translations. Remember to standardize tokenization, use multiple references when possible, and apply smoothing for short outputs. BLEU-4 (weights (0.25,0.25,0.25,0.25)) is a common choice, but inspecting individual n-gram scores helps diagnose what your model captures.
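As a follow-up on diagnosing individual n-gram orders, the sketch below uses NLTK's lower-level helpers to print the raw clipped precision for each order separately from the brevity penalty. It assumes NLTK is installed and reuses the references and candidate from the complete example.

```python
from nltk.translate.bleu_score import (
    brevity_penalty,
    closest_ref_length,
    modified_precision,
)

references = [
    "this is a dog".split(),
    "it is dog".split(),
    "dog it is".split(),
    "a dog, it is".split(),
]
candidate = "it is a dog".split()

# Clipped precision for each n-gram order, before any brevity penalty.
precisions = {
    n: float(modified_precision(references, candidate, n))
    for n in range(1, 5)
}
for n, p in precisions.items():
    print(f"{n}-gram precision: {p:.2f}")

# Brevity penalty, computed from the reference length closest to the candidate.
ref_len = closest_ref_length(references, len(candidate))
bp = brevity_penalty(ref_len, len(candidate))
print("brevity penalty:", bp)
```

Here the unigram and bigram precisions are perfect, the trigram precision is 0.5, and the 4-gram precision is 0. This points to correct word choice but an ordering that no reference shares over longer spans.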