Distributed Training for Trillion-Parameter Models: Advanced Scaling Strategies

Explore advanced strategies for distributed training for trillion-parameter models, including 3D parallelism, DeepSpeed, FSDP2, and RDMA networking.

Drake Nguyen

Founder · System Architect

The Need for Distributed Training for Trillion-Parameter Models

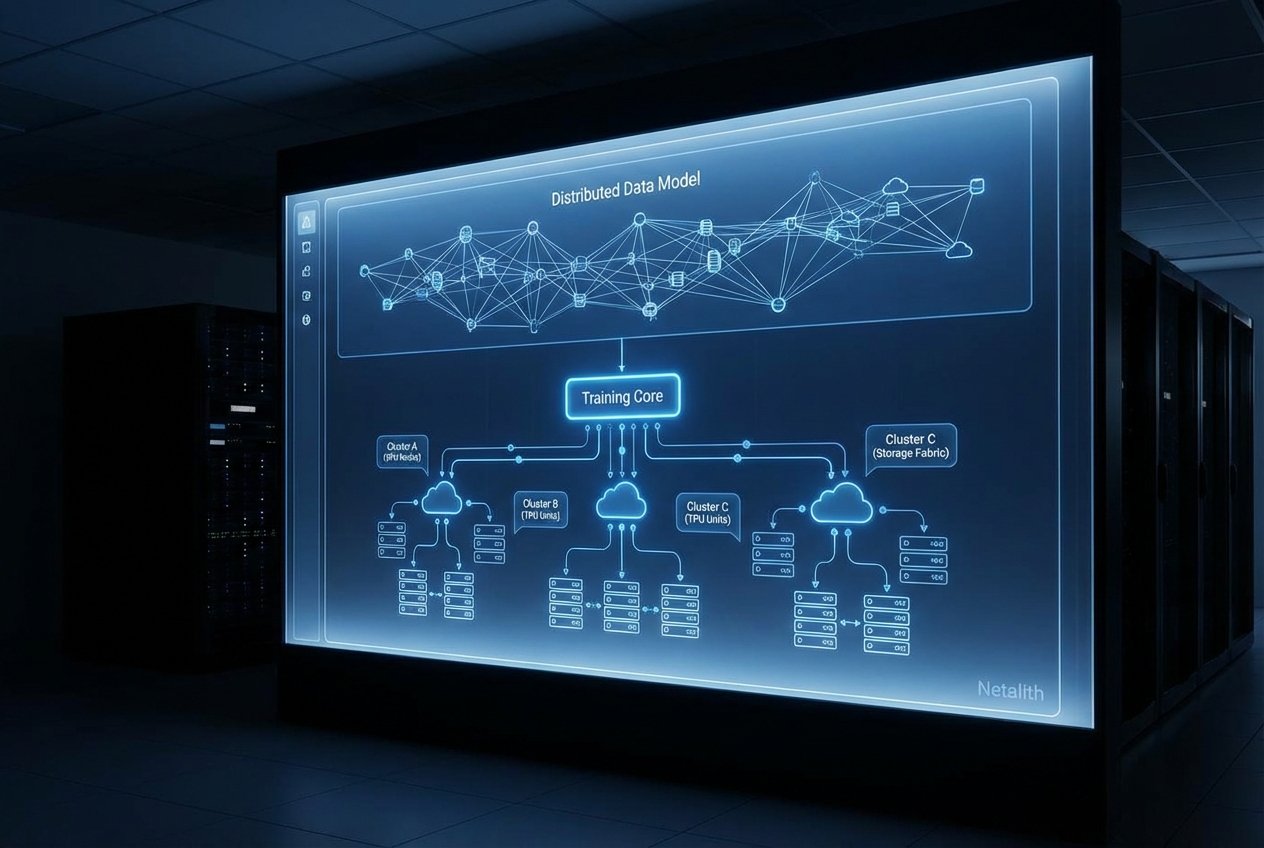

As artificial intelligence systems grow more capable, frontier models have moved well past the billion-parameter range. The next step, distributed training for trillion-parameter models, is an engineering challenge that single-node setups and naive data parallelism cannot meet. For AI engineers and cloud infrastructure architects, large-scale machine learning is no longer an optional skill; it is a prerequisite for building frontier models.

Deploying scalable machine learning models at this size requires sophisticated LLM optimization techniques. Training them demands thousands of synchronized GPUs, trillions of training tokens, and tight coordination of hardware and software. To do this without prohibitive compute costs or poor hardware utilization, engineering teams need robust parallelized training. This guide breaks down the methodologies, frameworks, and networking architectures required to scale deep learning workloads to the trillion-parameter mark.

Core Strategies for Parallelized AI Training

Efficient parallelized training requires moving beyond standard data parallelism to 3D parallelism: a combination of data, pipeline, and tensor parallelism. Scaling at the trillion-parameter tier is bounded by GPU memory. One trillion parameters in 16-bit precision occupy roughly 2 TB for the weights alone, so the entire model cannot be loaded onto a single accelerator.
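
As a rough illustration of how the three dimensions combine, the sketch below maps a global rank to data-, pipeline-, and tensor-parallel coordinates. The function name, the example degrees, and the rank ordering (tensor-parallel index varying fastest) are assumptions chosen for illustration; real frameworks define their own, often configurable, orderings.

```python
def rank_to_coords(rank, tp, pp, dp):
    """Map a global rank to (dp_rank, pp_rank, tp_rank).

    Hypothetical layout: the tensor-parallel index varies fastest, then
    pipeline, then data parallel. The world size is tp * pp * dp.
    """
    if not 0 <= rank < tp * pp * dp:
        raise ValueError("rank outside the tp * pp * dp world size")
    tp_rank = rank % tp
    pp_rank = (rank // tp) % pp
    dp_rank = rank // (tp * pp)
    return dp_rank, pp_rank, tp_rank


# Illustrative degrees: 8-way tensor, 4-way pipeline, 2-way data parallel = 64 GPUs.
coords = [rank_to_coords(r, tp=8, pp=4, dp=2) for r in range(64)]
```

Assuming 8 GPUs per node, this ordering places each tensor-parallel group inside one node, where the fastest interconnect is usually available.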

To reach large global batch sizes within these memory limits, engineers rely on gradient accumulation. The global batch is split into smaller micro-batches; each micro-batch runs its own forward and backward pass, and the gradients are summed across micro-batches before a single optimizer step. This avoids Out-Of-Memory (OOM) errors while preserving the effective batch size, which is foundational for keeping accelerator utilization high across large compute fabrics.
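
The equivalence behind gradient accumulation can be checked on a toy problem. The sketch below uses a one-parameter linear model and made-up data (both assumptions for illustration) and shows that micro-batch gradients, each weighted by its share of the global batch, add up to the full-batch gradient.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error of y ~ w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w, batch, micro_batch_size):
    """Accumulate micro-batch gradients so the total matches the full-batch mean."""
    total = 0.0
    for start in range(0, len(batch), micro_batch_size):
        micro = batch[start:start + micro_batch_size]
        # Weight each micro-batch by its share of the global batch.
        total += grad_mse(w, micro) * len(micro) / len(batch)
    return total


# Hypothetical (x, y) samples forming one global batch of size 4.
batch = [(1.0, 2.0), (2.0, 4.5), (3.0, 5.5), (4.0, 8.5)]
full = grad_mse(0.5, batch)
accumulated = accumulated_grad(0.5, batch, micro_batch_size=2)
```

Only one micro-batch of activations has to be resident at a time, which is where the memory saving comes from; the optimizer step sees the same gradient either way.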

Pipeline Parallelism for Transformers Guide

Pipeline parallelism divides the network's layers into stages placed on different devices or nodes. The first priority in any pipeline design is minimizing the "pipeline bubble": the idle time in which GPUs wait for activations or gradients from adjacent stages.

High-performance setups have moved from simple sequential pipelining to schedules such as interleaved 1F1B (One Forward, One Backward). Each device is assigned several smaller chunks of transformer layers instead of one large block, which shrinks the pipeline bubble at the cost of additional communication between stages. The 1F1B ordering also limits how many micro-batch activations each stage must hold at once, so memory consumption stays bounded while throughput stays high.
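
The effect of interleaving on the bubble can be estimated with a simple formula. The sketch below assumes every stage takes equal time per micro-batch and ignores communication cost; under those assumptions the ratio of idle time to ideal compute time is (p - 1) / m for p stages and m micro-batches, and interleaving with v chunks per device divides it by v. The function name and example numbers are illustrative.

```python
def bubble_ratio(num_stages, num_micro_batches, chunks_per_device=1):
    """Idle time divided by ideal compute time for a 1F1B-style schedule.

    Assumes equal per-stage compute time and zero communication cost.
    """
    return (num_stages - 1) / (chunks_per_device * num_micro_batches)


# Illustrative setup: 8 pipeline stages, 32 micro-batches per step.
plain = bubble_ratio(num_stages=8, num_micro_batches=32)
interleaved = bubble_ratio(num_stages=8, num_micro_batches=32, chunks_per_device=4)
```

With these numbers the ratio drops from about 0.22 to about 0.05. Raising the number of micro-batches has the same effect, which is one reason pipeline parallelism and gradient accumulation are usually tuned together.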

Tensor Parallelism at Scale

While pipeline parallelism splits the model by layers, tensor parallelism splits the individual layers themselves. At this scale it is effectively mandatory: the weights, activations, and optimizer state of a single wide Transformer layer can strain the High Bandwidth Memory (HBM) of one accelerator, and adding pipeline stages cannot make an individual layer smaller.

By sharding the Multi-Head Attention (MHA) and Feed-Forward Network (FFN) blocks across multiple GPUs, each device stores and computes only a slice of every weight matrix. In the common scheme, the first matrix multiplication of a block is split by columns and the second by rows, so each GPU produces a partial output, and the partial outputs are summed with an All-Reduce over a fast interconnect such as NVLink. This intra-layer sharding reduces per-device memory and shortens the forward and backward passes of each layer.
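
A minimal sketch of this column-then-row split for a two-layer feed-forward block, written in plain Python so it runs without a GPU. The matrices, the two-way split, and the helper names are assumptions for illustration; `all_reduce_sum` stands in for the real collective.

```python
def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def relu(m):
    return [[max(0.0, v) for v in row] for row in m]


def split_columns(m, parts):
    width = len(m[0]) // parts
    return [[row[i * width:(i + 1) * width] for row in m] for i in range(parts)]


def split_rows(m, parts):
    height = len(m) // parts
    return [m[i * height:(i + 1) * height] for i in range(parts)]


def all_reduce_sum(partials):
    """Element-wise sum of one matrix per rank (stand-in for All-Reduce)."""
    return [[sum(vals) for vals in zip(*rows)] for rows in zip(*partials)]


x = [[1.0, -2.0, 0.5], [0.0, 3.0, -1.0]]                               # activations
w1 = [[1.0, 0.0, -1.0, 2.0], [0.5, 1.0, 0.0, -1.0], [2.0, -1.0, 1.0, 0.0]]
w2 = [[1.0, 0.0], [0.0, 1.0], [-1.0, 2.0], [0.5, 0.5]]

reference = matmul(relu(matmul(x, w1)), w2)                            # single device

# Each "GPU" holds one column slice of w1 and the matching row slice of w2.
partials = [matmul(relu(matmul(x, w1_cols)), w2_rows)
            for w1_cols, w2_rows in zip(split_columns(w1, 2), split_rows(w2, 2))]
tensor_parallel = all_reduce_sum(partials)
```

Because the activation is element-wise, it can be applied to each column slice independently, so the block needs only one All-Reduce in the forward pass.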

Optimizing with Next-Gen Frameworks: DeepSpeed and FSDP2

The parallelism strategies above must be orchestrated by the software layer. In practice, this means relying on heavily optimized libraries rather than hand-written communication code. Two of the most widely used are DeepSpeed and PyTorch's Fully Sharded Data Parallel version 2 (FSDP2).

DeepSpeed provides memory optimizations designed for large clusters, centered on the Zero Redundancy Optimizer (ZeRO). ZeRO Stage 3 partitions optimizer states, gradients, and model parameters across the data-parallel ranks, so no rank holds a full replica. Combined with NVMe offloading (ZeRO-Infinity), DeepSpeed can move model state between GPU memory, CPU memory, and NVMe storage as needed, trading transfer bandwidth for capacity when working with multi-trillion-parameter models.
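
The memory effect of each ZeRO stage can be approximated with back-of-the-envelope arithmetic. The sketch below assumes mixed-precision Adam with the accounting commonly used for ZeRO: 2 bytes per parameter for 16-bit weights, 2 for 16-bit gradients, and 12 for 32-bit optimizer state. It ignores activations, buffers, and fragmentation; the function name and example sizes are illustrative.

```python
def zero_bytes_per_gpu(num_params, dp_degree, stage):
    """Approximate model-state bytes per GPU under mixed-precision Adam.

    Stage 0 replicates everything; stage 1 shards optimizer state,
    stage 2 also shards gradients, stage 3 also shards parameters.
    """
    params = 2 * num_params
    grads = 2 * num_params
    optim = 12 * num_params
    if stage >= 1:
        optim /= dp_degree
    if stage >= 2:
        grads /= dp_degree
    if stage >= 3:
        params /= dp_degree
    return params + grads + optim


# Illustrative case: one trillion parameters across 1024 data-parallel ranks.
per_stage = {s: zero_bytes_per_gpu(10**12, 1024, s) for s in range(4)}
```

Under these assumptions, model state falls from 16 TB per GPU with full replication to about 15.6 GB per GPU at Stage 3, before any offloading.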

FSDP2, in turn, is PyTorch's rewrite of Fully Sharded Data Parallel, itself an evolution of DistributedDataParallel (DDP). Each parameter is sharded across the data-parallel ranks. FSDP2 all-gathers the full parameters just in time for a layer's forward or backward computation, frees them afterward, and reduce-scatters gradients so that each rank keeps only its own shard. This gives fine-grained control over memory and simplifies setting up a trillion-parameter model.
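
The all-gather and reduce-scatter pattern can be simulated in plain Python. This is a toy model of the communication flow, not the FSDP2 API: the helper names, the linear model, and the data are all assumptions, and the "ranks" are simply list entries in one process.

```python
def shard(values, world_size):
    """Split a flat parameter list into equal contiguous shards, one per rank."""
    size = len(values) // world_size
    return [values[r * size:(r + 1) * size] for r in range(world_size)]


def all_gather(shards):
    """Every rank reconstructs the full parameter list from all shards."""
    return [v for s in shards for v in s]


def reduce_scatter(per_rank_grads, world_size):
    """Sum gradients across ranks, then leave each rank with only its slice."""
    summed = [sum(g) for g in zip(*per_rank_grads)]
    return shard(summed, world_size)


def local_grad(w, x, y):
    """Gradient of 0.5 * (w . x - y)^2 with respect to w."""
    err = sum(wi * xi for wi, xi in zip(w, x)) - y
    return [err * xi for xi in x]


world_size, lr = 2, 0.1
full_w = [0.5, -1.0, 2.0, 0.0]
data = [([1.0, 2.0, 0.0, 1.0], 1.0), ([0.0, 1.0, 3.0, -1.0], 2.0)]  # one sample per rank

shards = shard(full_w, world_size)               # each rank stores half of w
gathered = all_gather(shards)                    # full w exists only during compute
grads = [local_grad(gathered, x, y) for x, y in data]
grad_shards = reduce_scatter(grads, world_size)  # each rank gets its summed slice
shards = [[w - lr * g / world_size for w, g in zip(ws, gs)]
          for ws, gs in zip(shards, grad_shards)]

# Reference: unsharded data parallelism with averaged gradients.
reference = [w - lr * sum(g[i] for g in grads) / world_size
             for i, w in enumerate(full_w)]
```

The sharded update matches the replicated one, while each rank permanently stores only its own slice of the parameters and gradients.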

Solving Inter-node Communication Bottlenecks in AI Clusters

Even with optimal sharding, network latency and bandwidth can throttle cluster performance. Cluster-based deep learning relies on continuous synchronization of gradients, parameters, and activations. As clusters grow to tens of thousands of GPUs, mitigating inter-node communication bottlenecks becomes just as critical as optimizing the code itself.

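To keep communication overhead from dominating step time, engineers prioritize RDMA networking for AI clusters. Remote Direct Memory Access (RDMA) lets a network adapter read and write remote memory without involving the remote host's CPU or operating system kernel. With GPUDirect RDMA, the adapter accesses GPU memory directly, so data moves between GPUs on different nodes without being staged through host memory.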

- InfiniBand Interconnects: A widely deployed choice for high-bandwidth, low-latency communication, well suited to All-Reduce and All-Gather operations.

- RoCE v2 (RDMA over Converged Ethernet): A cost-effective alternative that carries RDMA traffic in UDP/IP packets over standard Ethernet switches, typically relying on Priority Flow Control (PFC) to avoid packet loss.

- Topology Awareness: Implementing advanced topologies, like non-blocking fat-tree or multi-dimensional torus configurations, ensures that the physical network layout matches the logical communication patterns of 3D parallelism.
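
To see why link bandwidth matters for the collectives mentioned above, a simple cost model for ring All-Reduce helps. The sketch below assumes a ring of N ranks, where each rank sends 2(N - 1) chunks of size S/N, a uniform per-link bandwidth, and an optional fixed per-step latency. The function name and the example figures (a 1 GB message over 400 Gb/s links) are illustrative, not measurements.

```python
def ring_all_reduce_seconds(message_bytes, num_ranks, bandwidth_bytes_per_s,
                            latency_s=0.0):
    """Estimate ring All-Reduce time: reduce-scatter plus all-gather phases.

    Each of the 2 * (N - 1) steps moves one chunk of message_bytes / N per rank.
    """
    steps = 2 * (num_ranks - 1)
    chunk_bytes = message_bytes / num_ranks
    return steps * (latency_s + chunk_bytes / bandwidth_bytes_per_s)


# Illustrative: 1 GB of gradients, 8 ranks, 400 Gb/s links (50e9 bytes/s).
estimate = ring_all_reduce_seconds(10**9, 8, 50e9)
```

For these inputs the model gives about 35 ms. The bandwidth term approaches 2S/B as the ring grows, while the latency term grows linearly with the number of ranks, which is one reason large clusters combine hierarchical collectives with topology-aware placement.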

"The success of a trillion-parameter run is rarely decided by compute alone; it is almost entirely dictated by the cluster's ability to communicate efficiently over the network."

Conclusion: The Future of Distributed Training for Trillion-Parameter Models

Neural network scaling laws suggest that the appetite for larger, more capable architectures will not slow down. Staying ahead requires a commitment to cloud-native AI development and the continual adoption of advanced deep learning frameworks. By combining pipeline and tensor parallelism, leveraging DeepSpeed and FSDP2, and building low-latency RDMA networks, organizations can manage the complexity of training at this scale.

Ultimately, mastering distributed training for trillion-parameter models is a defining technical milestone for the current generation of AI development. As model sizes continue to outgrow the memory of individual accelerators, these orchestration techniques will let AI engineers build the next generation of highly capable, general-purpose models.