Python Dictionary Implementation Details: Hash Maps and Performance Guide

Deep dive into Python dictionary implementation details. Learn about hash maps, internal mechanics, collision resolution, and Big O performance in CPython.

Drake Nguyen

Founder · System Architect

If you are working through a guide to Python data structures, the dictionary is perhaps the most important one to master. Dictionaries are the workhorses of the Python language, underpinning everything from global namespaces to class attributes. However, many developers use them daily without knowing how they are implemented. Understanding how they behave beneath the surface helps you reason about performance, and it is a common topic in Python coding interviews.

In this guide, we will unpack how dictionaries achieve their fast lookups, how they allocate memory, and how they resolve collisions. Knowing these hash table details helps you write more efficient code and predict how it will scale.

What is a Hash Map in Python?

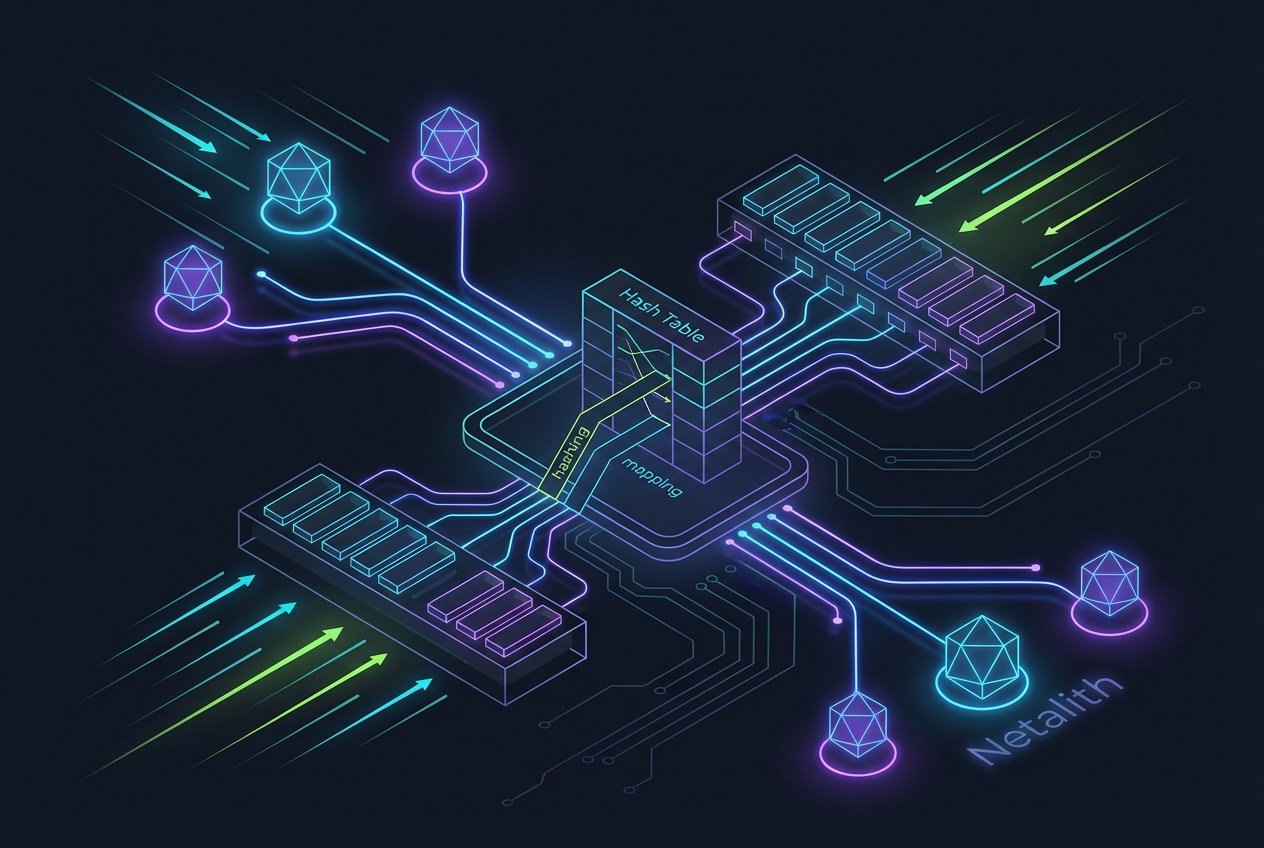

Before diving into CPython's specific architecture, we need to understand the fundamental concept of a hash map. In computer science, an associative array is an abstract data type that stores key-value pairs, and a hash table is one common data structure for implementing it. A Python dictionary is essentially a highly optimized hash table.

The key takeaway is that a hash map applies a hash function to the key to compute an index into an array of buckets or slots, from which the desired value can be found. Unlike a linked list, where you must traverse elements sequentially, or a linear search through a Python list, a hash map jumps almost directly to the required data.
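To make the contrast concrete, here is a minimal sketch that counts how many items a sequential scan examines before it finds a key, next to the equivalent dictionary lookup. The data and the `list_lookup` helper are hypothetical and exist only for illustration.

```python
# Hypothetical data: 1,000 (key, value) pairs.
pairs = [(f"host-{i}", f"10.0.0.{i % 256}") for i in range(1000)]


def list_lookup(items, wanted):
    """Scan sequentially; return the value and the number of items examined."""
    for steps, (key, value) in enumerate(items, start=1):
        if key == wanted:
            return value, steps
    raise KeyError(wanted)


table = dict(pairs)

value_from_list, steps = list_lookup(pairs, "host-999")
value_from_dict = table["host-999"]  # hash the key once, then probe its slot

print(steps)                               # 1000: the scan touched every pair
print(value_from_list == value_from_dict)  # True
```

The scan does work proportional to the position of the key, while the dictionary lookup does roughly the same small amount of work wherever the key was inserted.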

"At its core, a Python dictionary provides a robust interface over underlying hash tables, optimizing for rapid key lookups, insertions, and deletions."

Python Dictionary Implementation Details: How They Work Under the Hood

CPython 3.6 introduced a significant redesign of the dictionary's internals, and Python 3.7 made the resulting insertion-order behavior part of the language specification. To understand how Python dictionaries work under the hood, start with the compact layout, which splits storage across two arrays.

Historically, dictionaries stored each hash, key, and value together in a single sparse array. Today, a CPython dict relies on two separate arrays:

- A dense array: Stores the actual key-value pairs and their computed hashes in the exact order they were inserted.

- A sparse array (indices array): Acts as the actual hash table. It stores integers that point to the indices in the dense array.

This architectural shift reduced dictionary memory use by roughly 20% to 25% compared with the previous layout, and it also gave developers a highly sought-after feature: dictionaries that naturally maintain insertion order. It is a good example of how language designers balance speed against memory efficiency.
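The two-array layout can be sketched as a toy model in pure Python. This is not CPython's implementation: the class name `ToyCompactDict` is hypothetical, the table has a fixed size with no resizing, and it uses plain linear probing where CPython uses a perturbed probe sequence. It only illustrates how a sparse index table can point into a dense, insertion-ordered entry list.

```python
class ToyCompactDict:
    """Illustrative model only: a sparse index table plus a dense entry list."""

    FREE = -1  # marker for an unused slot in the sparse table

    def __init__(self, size=8):
        self.indices = [self.FREE] * size  # sparse: slot -> position in entries
        self.entries = []                  # dense: (hash, key, value), insertion order

    def _slots(self, h):
        # Simplification: linear probing over a power-of-two table.
        mask = len(self.indices) - 1
        i = h & mask
        while True:
            yield i
            i = (i + 1) & mask

    def __setitem__(self, key, value):
        h = hash(key)
        for slot in self._slots(h):
            pos = self.indices[slot]
            if pos == self.FREE:
                # Toy limitation: no resizing, so keep at least one slot free.
                if len(self.entries) >= len(self.indices) - 1:
                    raise RuntimeError("toy table is full")
                self.indices[slot] = len(self.entries)
                self.entries.append((h, key, value))
                return
            entry_hash, entry_key, _ = self.entries[pos]
            if entry_hash == h and entry_key == key:
                self.entries[pos] = (h, key, value)  # overwrite, position unchanged
                return

    def __getitem__(self, key):
        h = hash(key)
        for slot in self._slots(h):
            pos = self.indices[slot]
            if pos == self.FREE:
                raise KeyError(key)
            entry_hash, entry_key, value = self.entries[pos]
            if entry_hash == h and entry_key == key:
                return value

    def keys(self):
        return [key for _, key, _ in self.entries]


toy = ToyCompactDict()
real = {}
for k, v in [("b", 1), ("a", 2), ("b", 3)]:
    toy[k] = v
    real[k] = v

print(toy.keys())   # ['b', 'a']
print(list(real))   # ['b', 'a']
print(toy["b"])     # 3
```

Because new entries are only ever appended to the dense list, iterating over it yields keys in insertion order, which matches what the built-in dict does for the same operations.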

The Role of Hash Functions in Mapping Keys

Every explanation of a hash map begins with the hashing process. When you insert a key-value pair into a dictionary, Python calls the key's hash function to convert the key into a fixed-size integer. This integer is then reduced (via a bitwise AND with the current table size minus one, which works because the table size is a power of two) to an initial index in the sparse array.

```python
# A simplified look at hashing
my_dict = {}
key = "server_host"
value = "127.0.0.1"

# Python computes the hash of the key when the pair is stored
my_dict[key] = value
hash_value = hash(key)
print(hash_value)  # Output: an integer such as 894562187654... (string hashes vary between runs)
```
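The masking step described above can be sketched as follows. The helper `slot_for` and the table size of 8 are assumptions chosen for illustration; the sketch only shows the initial slot, not the probing that follows a collision.

```python
def slot_for(key, table_size=8):
    """Return the initial slot for a key, assuming table_size is a power of two."""
    mask = table_size - 1
    return hash(key) & mask


# In CPython, small integers hash to themselves, so these results are stable.
for key in (1, 9, 17):
    print(key, slot_for(key))  # each of these keys lands in slot 1

# String hashes are randomized per process, so only the range is predictable.
print(0 <= slot_for("server_host") < 8)  # True
```

Keys 1, 9, and 17 differ only in bits above the mask, so they start at the same slot. This is exactly the situation that collision resolution has to handle.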

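Because the dictionary relies on this mechanism, all keys must be hashable: the hash must stay the same for the key's lifetime and be consistent with equality. Built-in immutable types such as strings, integers, and tuples of hashable items qualify. Built-in mutable containers like lists cannot be hashed, because a change in their contents would change their hash value and effectively lose them inside the data structure.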
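A quick way to check which objects can serve as keys is to call `hash()` and catch the `TypeError`. The `is_hashable` helper and the `Point` class below are hypothetical names used only for this sketch.

```python
class Point:
    """A plain user-defined class: mutable, yet hashable by default (identity-based)."""

    def __init__(self, x):
        self.x = x


def is_hashable(obj):
    """Return True if hash(obj) succeeds."""
    try:
        hash(obj)
    except TypeError:
        return False
    return True


print(is_hashable("text"))      # True
print(is_hashable((1, 2)))      # True
print(is_hashable([1, 2]))      # False: lists are mutable containers
print(is_hashable((1, [2])))    # False: a tuple is only hashable if its items are
print(is_hashable(Point(1)))    # True: default hash does not depend on attributes
```

The last two lines show why "immutable" is only an approximation of the rule: what matters is that the hash stays stable and agrees with equality.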

Memory Allocation and the Load Factor

As you add more key-value pairs, the dictionary inevitably fills up. The metric that dictates when the dictionary must grow is called the load factor. In CPython, the table is resized when an insertion would push it past two-thirds (about 66.7%) full.

Resizing allocates a new, larger sparse array and re-inserts the existing entries into it. Because the table always keeps some slots free, probe sequences stay short and future operations remain fast, which preserves the expected constant-time behavior.
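Resizing can be observed indirectly with `sys.getsizeof`, which reports the memory held by the dict object itself. The sketch below records the size after each insertion and prints only the points where it changes. The exact byte counts and thresholds depend on the CPython version and platform, so no specific output is shown.

```python
import sys

d = {}
sizes = []
for i in range(100):
    d[i] = None
    sizes.append(sys.getsizeof(d))

# Keep only the insert counts at which the reported size changed.
growth_points = [
    (n + 1, size)
    for n, size in enumerate(sizes)
    if n == 0 or size != sizes[n - 1]
]

for count, size in growth_points:
    print(f"{count:>3} keys -> {size} bytes")
```

The size stays flat across many insertions and then jumps, which is the resize described above: memory is allocated in steps rather than one slot at a time.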

Handling Hash Collisions in Python Dict

A hash collision occurs when two distinct keys map to the same index in the sparse array. Because no hash function is perfect and the array size is finite, collision handling is a critical part of the dictionary's internals.

Many languages use a technique called "chaining" (creating a linked list at the collision index). Python, however, uses a form of collision resolution called open addressing with pseudo-random probing.

When a collision happens, Python's probing algorithm computes the next index from the current index and the remaining high bits of the original hash, using a simple recurrence relation. It continues to probe the sparse array until it finds either the matching key or an empty slot. Because everything lives in flat arrays, this approach avoids the per-node allocations and pointer chasing of linked-list-based collision resolution.
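The probing recurrence can be sketched in pure Python. The function below follows the recurrence used in CPython's `dictobject.c` (`perturb >>= 5`, then `i = (i * 5 + perturb + 1) & mask`). The function name `probe_sequence`, the 64-bit masking of the hash, and the `limit` parameter are assumptions made for this illustration.

```python
PERTURB_SHIFT = 5  # shift constant used by CPython's dict probing


def probe_sequence(hash_value, table_size, limit):
    """Yield the first `limit` slots probed for a hash in a power-of-two table."""
    mask = table_size - 1
    # Assumption: treat the hash as an unsigned 64-bit value, as on a 64-bit build.
    perturb = hash_value & 0xFFFFFFFFFFFFFFFF
    i = perturb & mask
    for _ in range(limit):
        yield i
        perturb >>= PERTURB_SHIFT
        i = (i * 5 + perturb + 1) & mask


# Hashes 1 and 33 both start at slot 1 in a table of size 8 ...
print(list(probe_sequence(1, 8, 8)))   # [1, 6, 7, 4, 5, 2, 3, 0]
# ... but the higher bits of 33 send its second probe somewhere else.
print(list(probe_sequence(33, 8, 4)))  # [1, 7, 4, 5]
```

Once the perturbation has shifted down to zero, the recurrence visits every slot in the table, so a probe is guaranteed to reach an empty slot as long as one exists.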

Dictionary Performance and Big O in Python

When analyzing dictionary performance in Big O terms, we see why dictionaries are favored for tasks ranging from memoization to large-scale data aggregation. Thanks to hashing and direct indexing, dictionary lookups, insertions, and deletions run in constant time, O(1), on average.

"Amortized" means that while an occasional insertion might be slow (when the dictionary reaches its load factor and must resize, taking O(n) time), that cost is spread across the many cheap insertions around it, so the average cost per operation stays constant. For key-based retrieval, this beats scanning a list, stack, or queue, which takes O(n) time.
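The amortized argument can be checked with a small simulation. This is a toy cost model, not a measurement of CPython: it assumes a table that starts with 8 slots and doubles whenever the next insertion would exceed two-thirds full, and it counts how many entries are re-inserted by resizes. The function name `total_reinsert_work` is hypothetical.

```python
def total_reinsert_work(n_inserts, initial_size=8):
    """Count entries re-inserted by resizes in a toy table that doubles at 2/3 full."""
    size = initial_size
    used = 0
    work = 0
    for _ in range(n_inserts):
        if (used + 1) * 3 > size * 2:  # the next insert would exceed two-thirds
            work += used               # every existing entry is re-inserted
            size *= 2
        used += 1
    return work


for n in (1_000, 10_000, 100_000):
    # Resize work per insertion stays below a small constant as n grows.
    print(n, total_reinsert_work(n) / n)
```

Each resize is expensive on its own, but because the table doubles, resizes become rarer as the table grows, and the total re-insertion work stays proportional to the number of insertions.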

- Average Case (Lookup/Insert/Delete): O(1)

- Worst Case (Heavy Collisions/Resizing): O(n)

- Iteration: O(n)
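The worst case can be provoked deliberately with a key type whose hash is constant. The `Colliding` class below is a hypothetical, intentionally bad example. It counts equality checks to show that a single lookup has to compare against many stored keys when every key collides.

```python
class Colliding:
    """Hypothetical key type whose instances all share one hash value."""

    eq_calls = 0  # class-level counter of equality comparisons

    def __init__(self, name):
        self.name = name

    def __hash__(self):
        return 42  # deliberately terrible: every key collides

    def __eq__(self, other):
        Colliding.eq_calls += 1
        return isinstance(other, Colliding) and self.name == other.name


n = 200
table = {Colliding(i): i for i in range(n)}

# Look up a key that is absent, so the probe must rule out every stored key.
Colliding.eq_calls = 0
found = Colliding("absent") in table

print(found)                # False
print(Colliding.eq_calls)   # on CPython, about one comparison per stored key
```

With a well-distributed hash, the same lookup would typically need zero or one comparison, which is the difference between the O(1) average case and the O(n) worst case.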

In practice, CPython's hash functions and probing sequence spread keys well enough that the worst-case O(n) scenario is exceedingly rare under normal workloads.

Conclusion: Mastering Python Dicts for Interviews

From open addressing to the compact two-array layout, digging into Python dictionary implementation details gives you a solid understanding of how your code performs. By knowing how hash functions and load factors dictate memory usage, you can predict how your applications will behave under heavy data loads.

Whether you are writing custom data processing scripts or preparing for system design interviews, this knowledge helps you use dictionaries deliberately and to their full potential.

Frequently Asked Questions

What is the time complexity of a Python dictionary?

The average time complexity for lookups, insertions, and deletions in a Python dictionary is amortized constant time, or O(1). This makes it one of the most efficient data structures for rapid data retrieval.

Why must Python dictionary keys be hashable?

Keys must be hashable because the dictionary uses the hash value to determine the storage location. If a key's hash changed after insertion, the dictionary would look in the wrong slot and could no longer locate the associated value. This is why built-in mutable containers such as lists cannot be used as keys.